1.4. llms#

See related papers in the 📌 llm basics and 📌 interpretability pages.

1.4.1. prompting#

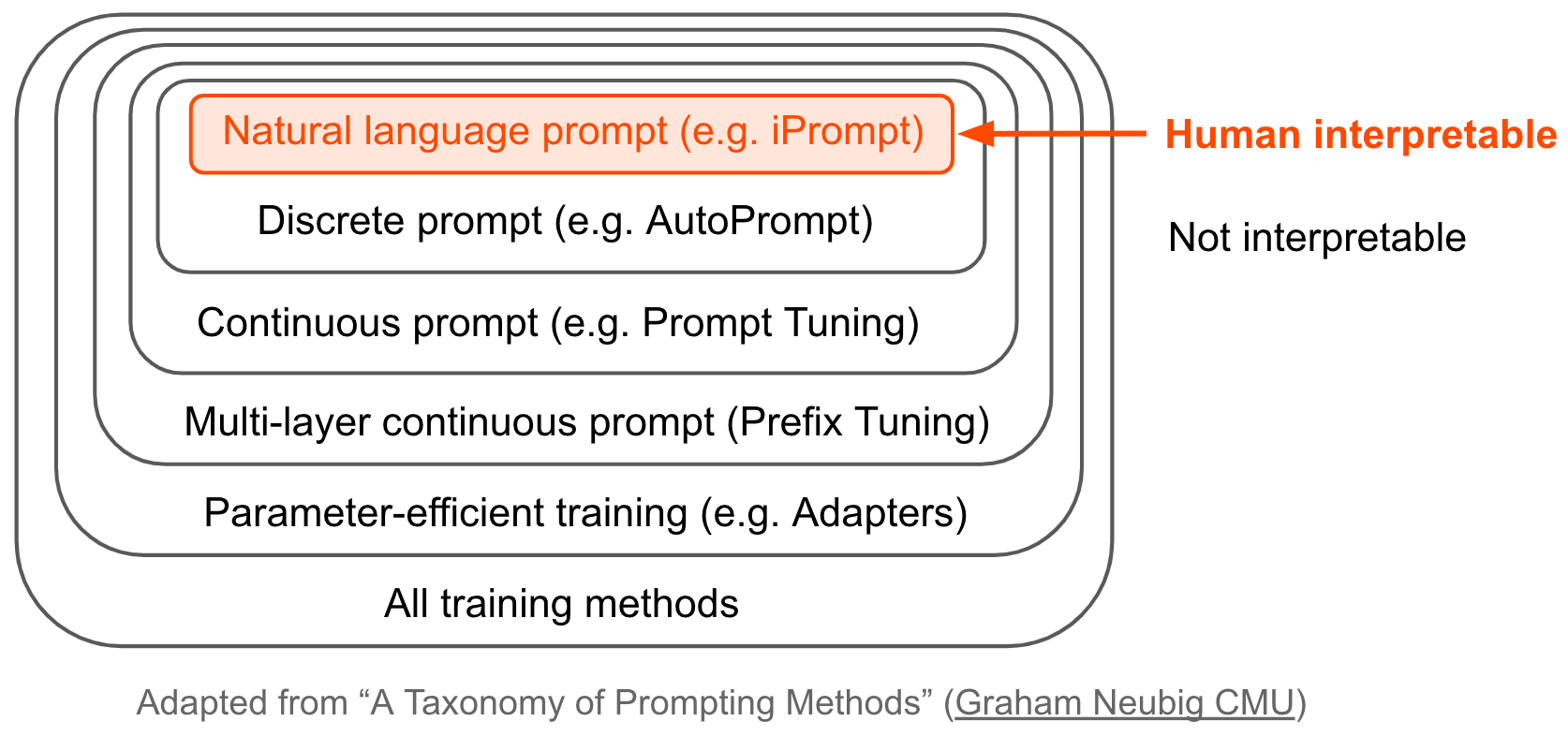

Over time, ML has bounced from feature-engineering -> architecture engineering -> prompt engineering (nowadays, it’s data engineering)

Pre-train, Prompt, and Predict: A Systematic Survey of Prompting Methods in Natural Language Processing (liu…neubig, 2021)

Overview figure

early prompting papers

LAMA: LMs as Knowledge Bases? (petroni…riedel, 2019) - use fill-in-the-blank (cloze) prompts for extracting knowledge from LLMs

create LAMA probe - dataset of (subject, relation, object) triplets with templates – find that BERT can recall these relations

How to Query LMs? (adolphs et al. 2021) - query LLMs by example (e.g. “Ronaldo plays for Portugal. Who does Neuer play for?”)

How Can We Know What LMs Know? (jiang … neubig, 2020)

mining-based and paraphrasing-based methods to automatically generate high-quality diverse prompts

ensemble methods to combine answers from different prompts (e.g. avg logits and more)
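A minimal sketch of the "avg logits" ensembling idea, assuming each paraphrased prompt has already been scored against the same candidate answers. The logits and candidate names below are made up for illustration, and `ensemble_avg_logits` is a hypothetical helper, not the paper's code.

```python
import math

def softmax(logits):
    # Numerically stable softmax over a list of floats
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def ensemble_avg_logits(logits_per_prompt):
    """Average candidate-answer logits across prompts, then normalize."""
    n_prompts = len(logits_per_prompt)
    n_answers = len(logits_per_prompt[0])
    avg = [sum(row[j] for row in logits_per_prompt) / n_prompts
           for j in range(n_answers)]
    return softmax(avg)

# Hypothetical logits for 3 candidate answers under 3 paraphrased prompts
candidates = ["Paris", "Lyon", "Nice"]
logits_per_prompt = [
    [2.0, 1.0, 0.0],
    [0.5, 1.5, 0.0],  # this prompt alone would pick "Lyon"
    [3.0, 0.5, 0.5],
]
probs = ensemble_avg_logits(logits_per_prompt)
best = candidates[probs.index(max(probs))]
print(best, [round(p, 3) for p in probs])
```

Averaging lets a single misleading prompt be outvoted by the others; weighted variants would replace the plain mean with learned per-prompt weights.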

Noisy Channel LM Prompting for Few-Shot Text Classification (min et al. 2022)

Querying \(P(question|answer)\) with Bayes rule outperforms standard querying \(P(answer|question)\)

1.4.1.1. (auto)prompting#

natural-language prompting

iPrompt: Explaining Patterns in Data with LMs via Interpretable Autoprompting (singh, morris, …gao, 2022)

APE: LLMs Are Human-Level Prompt Engineers (zhou…ba, 2022)

similar to iPrompt, (1) propose prompt candidates with an LLM, (2) score the prompts by the accuracy they yield when using another LLM and (3) regenerate similar prompt candidates

experiments on instruction induction datasets + truthful QA

FluentPrompt: Toward Human Readable Prompt Tuning (shi, …, zettlemoyer, 2022) - use langevin sampling + fluency constraint to generate prompt

experiments relatively weak: 3 sentiment datasets + autoprompt is the only baseline

APO: Automatic Prompt Optimization with “Gradient Descent” and Beam Search (pryzant…zeng, 2023) - update prompts based on errors made by previous prompts: the LLM computes a “gradient” by describing the errors

OPRO: LLMs as Optimizers (yang…quoc le, zhou, & chen, 2023) - add in past prompts with their scores during optimization

Promptbreeder: Self-Referential Self-Improvement Via Prompt Evolution (fernando…rocktaschel, 2023) - simultaneously improve prompts with LLM + improve the mutation-prompts the LLM uses to mutate the prompts

Connecting LLMs with Evolutionary Algorithms Yields Powerful Prompt Optimizers (guo…yang, 2023)

PromptAgent: Strategic Planning with LMs Enables Expert-level Prompt Optimization (wang…hu, 2023) - iterate on prompt errors using MC tree search

LMs as Black-Box Optimizers for Vision-LMs (yu…pathak, & ramanan, 2023)

Are LLMs Good Prompt Optimizers? (ma…huang, 2024) - critique that models often struggle

discrete prompting

AutoPrompt: Eliciting Knowledge from LMs with Automatically Generated Prompts (shin…sameer singh, 2020)

select prompts from a fixed set of tokens (resulting prompts are not coherent)

Universal Adversarial Triggers for Attacking and Analyzing NLP (wallace…sameer singh, 2019 ) - find input-agnostic sequences of tokens that trigger a model to produce a specific prediction when concatenated to any input from a dataset

RLPrompt: Optimizing Discrete Text Prompts with Reinforcement Learning (deng…hu, 2022)

LM-BFF: Making Pre-trained LMs Better Few-shot Learners (gao et al. 2020) - uses T5 to generate (i) template for the task (which might include a whole example or two) + (ii) appropriate label tokens in the vocabulary for the task (suffers from computationally intensive search + sub-optimal discrete space search)

PADA: Example-based Prompt Learning for on-the-fly Adaptation to Unseen Domains (ben-david, …, reichart, 2022)

continuous prompt optimization

Prefix-Tuning: Optimizing Continuous Prompts for Generation (li & percy liang, 2021) – optimizes in continuous space for language generation tasks

learn to map some parameters \(\theta\) through an MLP to generate a starting hidden state \(h_i\) – never actually sends the prefix through the network

P-Tuning: GPT Understands, Too (liu et al. 2021) – use LSTM to generate prompt embeddings (don’t map to tokens)

Control Prefixes for Parameter-Efficient Text Generation (clive, cao, & rei, 2022) - allow for adapting the prefix to each input example

DART: Differentiable Prompt Makes Pre-trained LMs Better Few-shot Learners (zhang…chen, 2022)

reformulating NLP task into differentially optimizing the prompt template + target label (given a pre-trained model)

focus on smaller models (Roberta-large + GPT-2) + few training shots

fluency constraint to ensure association among prompt embeddings

WARP: Word-level Adversarial ReProgramming (Hambardzumyan et al. 2021) - add continuous tokens + some task-specific parameters for better generalization

KnowPrompt: Knowledge-aware Prompt-tuning with Synergistic Optimization for Relation Extraction (chen et al. 2021) – incorporate relations, visualize learned prompt vectors with t-SNE

misc

Context-faithful Prompting for LLMs (zhou, shang, poon & chen, 2023) - ask question in clever way to force LLM to follow it

SentiPrompt: Sentiment Knowledge Enhanced Prompt-Tuning for Aspect-Based Sentiment Analysis (zhang et al. 2021) - use sentiment knowledge penalties in the prompt

Meta-learning via LM In-context Tuning (yanda chen…he, 2022) - given new task with new instruction

Prompt Programming for LLMs: Beyond the Few-Shot Paradigm (Reynolds & McDonell, 2021) - define metaprompts as general wrappers around tasks e.g. “This problem asks us to”

Re3: Generating Longer Stories With Recursive Reprompting and Revision (yang et al. 2022) - generate summaries, then expand and revise with prompts

Directional Stimulus Prompting (li, baoling peng, …jianfeng gao, xifeng yan, 2023) - generate hint keywords using small LLM that are put into the prompt when calling large LLM

memory-assisted prompt-editing (madaan…yang, 2022) - allows model to “save things to memory” that get added to prompt when needed

Prompting Is Programming: A Query Language For LLMs (beurer-kellner, fischer, & vechev, 2022)

can benefit from training for promptability

Adapting LMs for Zero-shot Learning by Meta-tuning on Dataset and Prompt Collections (zhong…klein, 2021)

Continued Pretraining for Better Zero- and Few-Shot Promptability (wu…sameer singh, beltagy, 2022)

1.4.1.2. chain-of-thought#

Chain-of-Thought Prompting (wei et al. 2022): in few-shot prompts, don’t just provide answer but also reasoning

model outputs reasoning + answer, leading to improved performance

Self-Discover: LLMs Self-Compose Reasoning Structures (zhou…le…zheng, 2024) - LLMs come up with their own step-by-step structure for a task

Self-Consistency Improves Chain of Thought Reasoning in LMs (wang, wei, schuurmans, quoc le, … zhou, 2022) - sample multiple outputs rather than decoding greedily, and return the most consistent final answer in the set
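A minimal sketch of the self-consistency vote, assuming the sampled reasoning chains are already available as strings and that each ends with a final answer after an "Answer:" marker (that marker and the `extract_answer` helper are assumptions for illustration).

```python
from collections import Counter

def extract_answer(text):
    # Assumes the final answer follows the last "Answer:" marker
    return text.rsplit("Answer:", 1)[-1].strip()

def self_consistency(sampled_outputs, extract):
    """Majority vote over final answers; also return the agreement rate."""
    answers = [extract(o) for o in sampled_outputs]
    answer, count = Counter(answers).most_common(1)[0]
    return answer, count / len(answers)

# Hypothetical samples for "What is (3 + 4) * 2?"
samples = [
    "3 + 4 = 7, then 7 * 2 = 14. Answer: 14",
    "Double 3 is 6, double 4 is 8, 6 + 8 = 14. Answer: 14",
    "3 + 4 = 8, then 8 * 2 = 16. Answer: 16",
]
answer, agreement = self_consistency(samples, extract_answer)
print(answer, agreement)
```

The reasoning text is discarded before voting, so two chains that reach the same answer by different routes count as agreeing.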

Challenging BIG-Bench Tasks and Whether Chain-of-Thought Can Solve Them (suzgun, …, quoc le, …, jason wei, 2022)

self-ask (Press et al., 2022) - LLM asks itself (and then answers) follow-up questions before answering the initial question

Text Classification via LLMs (sun…wang, 2023) - add clues to the prompt

Let’s Do a Thought Experiment: Using Counterfactuals to Improve Moral Reasoning (ma, …, chen, 2023) - counterfactuals help improve CoT

RCOT: Detecting and Rectifying Factual Inconsistency in Reasoning by Reversing Chain-of-Thought (xue et al. 2023)

SelfCheck: Using LLMs to Zero-Shot Check Their Own Step-by-Step Reasoning (miao, teh, & rainforth, 2023)

EchoPrompt: Instructing the Model to Rephrase Queries for Improved In-context Learning (mekala…sameer singh, 2023) - replace “let’s think step by step” with “Let’s repeat the question and also think step by step”

Let’s Think Dot by Dot: Hidden Computation in Transformer LMs (pfau, merrill, & bowman, 2024)

Show Your Work: Scratchpads for Intermediate Computation with LMs (nye et al. 2021)

selection inference (creswell et al. 2022) - generate set of facts, then iteratively generate inferences from the facts to yield the final answer

least-to-most prompting (zhou…quoc le et al. 2022) - prompt LLM with context showing how to reduce into subproblems; then LLM sequentially solves the subproblems, using the previous answers

Generated Knowledge Prompting for Commonsense Reasoning (liu…hajishirzi, 2021) - generate knowledge from an LLM then provide it as additional input when answering a question

maieutic prompting (jung et al. 2022) - generate a tree of all explanations of the form “True, because…”, “False, because…” then query LLM with these as prompts

then use Max-SAT to try to satisfy as many relations between the model explanations as possible to come up with the true answer

1.4.1.3. self-verification#

LM vs LM: Detecting Factual Errors via Cross Examination (cohen et al. 2023)

Thread of papers combating hallucination

verifiers (cobbe et al. 2021) - train model to judge whether an answer and thought are likely to be “valid”

subgoal search (czechowski et al. 2021) - train model to generate subgoals then solve them in a graph

STaR “Self-taught reasoner” (zelikman…goodman, 2022)

first, finetune on observed \((Q, T, A)\) triplets, where \(T\) is a rationale

then, impute unknown \(T_i\) given dataset of pairs \((Q_i, A_i)\) by sampling until finding a \(T_i\) which leads to the correct answer

zero-shot planning in robotics (huang, abbeel, pathak, & mordatch, 2022)

Prover-Verifier Games improve legibility of LLM outputs (kirchner, chen, … leike, mcaleese, & burda, 2024) - trained strong LMs to produce text that is easy for weak LMs to verify and found that this training also made the text easier for humans to evaluate

self-verification

review on self-verification (pan…wang, 2023)

Self-Refine: Iterative Refinement with Self-Feedback (madaan, …, clark, 2023)

Self-Verification Improves Few-Shot Clinical Information Extraction (gero et al. 2023)

SelfCheckGPT: Zero-Resource Black-Box Hallucination Detection for Generative LLMs (manakul…gales, 2023)

process reward models (openai, 2024) - identify and mitigate intermediate reasoning errors rather than just final answer

1.4.1.4. sampling / efficient inference#

tree-related

Aug-tree (singh, askari, caruana, & gao, 2023)

Tree-prompting (morris, singh, rush, gao, & deng, 2023)

Interpretable-by-Design Text Classification with Iteratively Generated Concept Bottleneck (ludan…callison-burch, 2023)

tree of thoughts (yao et al. 2023) - LLM generates a tree of intermediate answers and performs steps such as backtracking

Graph of Thoughts: Solving Elaborate Problems with LLMs (besta, .., hoefler, 2023) - allows merging/looping in the tree, e.g. for sorting

optimizing cost efficiency

frugalGPT (chen, zaharia, & zou, 2023)

3 components

prompt adaptation - identify effective / shorter prompts (e.g. fewer demonstrations)

LLM approximation - create simpler/cheaper LLMs

LLM cascade - adaptively choose LLM based on query

train “generation scoring function” - returns reliability score from 0 to 1 for each (question, answer)

router sequentially proceeds through LLM APIs, returning the answer if the reliability score is high enough
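A minimal sketch of the LLM cascade described above, assuming each "API" is just a Python callable with a fixed cost and that the scoring function is given. The models, costs, thresholds, and `toy_scorer` are all hypothetical stand-ins; in the paper the scorer is a trained model.

```python
def cascade(query, models, scorer, thresholds):
    """Try models cheapest-first; stop once the reliability score is high enough."""
    if not models:
        raise ValueError("need at least one model")
    total_cost = 0.0
    for (name, call, cost), threshold in zip(models, thresholds):
        answer = call(query)
        total_cost += cost
        if scorer(query, answer) >= threshold:
            break  # reliable enough: skip the more expensive models
    return name, answer, total_cost

# Hypothetical stand-ins for a cheap and an expensive LLM API
def small_model(q):
    return "4" if q == "2+2?" else "unsure"

def large_model(q):
    return {"2+2?": "4", "capital of France?": "Paris"}.get(q, "unsure")

def toy_scorer(q, a):
    # Stand-in for the learned scoring function: reliability in [0, 1]
    return 0.1 if a == "unsure" else 0.9

models = [("small", small_model, 1.0), ("large", large_model, 20.0)]
thresholds = [0.5, 0.0]  # last threshold 0.0: always accept the final model

easy = cascade("2+2?", models, toy_scorer, thresholds)
hard = cascade("capital of France?", models, toy_scorer, thresholds)
print(easy, hard)
```

The easy query stops at the cheap model, while the hard one pays for both calls; the thresholds control that cost/accuracy tradeoff.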

frugalML (chen, zaharia, zou, 2020) - tradeoff performance with budget for sequential cascade of API calls for single label

FrugalMCT (chen, zaharia, zou, 2022) - extends to multilabel

EcoAssistant: Using LLM Assistant More Affordably and Accurately (zhang…awadallah, wang, 2023) - answer code-driven queries efficiently using code executor + cascade of increasingly complex LLMs

decoding (basics in HF blog post + docs on slightly more advanced stuff)

greedy - iteratively pick highest-probability token

nucleus sampling: The Curious Case of Neural Text Degeneration (holtzman…choi, 2019)
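A minimal sketch contrasting greedy decoding with nucleus (top-p) sampling for a single step, assuming the next-token distribution is given as a plain list of probabilities (the numbers are made up).

```python
import random

def greedy(probs):
    # Index of the highest-probability token
    return max(range(len(probs)), key=lambda i: probs[i])

def nucleus_candidates(probs, top_p):
    """Smallest set of top tokens whose cumulative probability reaches top_p."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, mass = [], 0.0
    for i in order:
        kept.append(i)
        mass += probs[i]
        if mass >= top_p:
            break
    return kept

def nucleus_sample(probs, top_p, rng):
    kept = nucleus_candidates(probs, top_p)
    weights = [probs[i] for i in kept]  # random.choices renormalizes the weights
    return rng.choices(kept, weights=weights, k=1)[0]

probs = [0.5, 0.3, 0.15, 0.05]  # hypothetical next-token distribution
rng = random.Random(0)
nucleus = nucleus_candidates(probs, top_p=0.9)
samples = [nucleus_sample(probs, 0.9, rng) for _ in range(200)]
print(greedy(probs), nucleus, sorted(set(samples)))
```

With `top_p=0.9` the low-probability tail token is never sampled, which is the point of truncating to the nucleus.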

contrastive decoding (li et al. 2022) - decode based on the difference between a large and small LLM
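A minimal sketch of one contrastive-decoding step, assuming next-token probabilities from the large ("expert") and small ("amateur") model are given. Tokens are scored by the log-probability difference, restricted to tokens the expert itself finds plausible; the value of `alpha` and the probabilities are illustrative assumptions.

```python
import math

def contrastive_scores(expert_probs, amateur_probs, alpha=0.1):
    """Score = log p_expert - log p_amateur, over tokens with
    p_expert >= alpha * max(p_expert) (plausibility constraint)."""
    cutoff = alpha * max(expert_probs)
    scores = {}
    for tok, (pe, pa) in enumerate(zip(expert_probs, amateur_probs)):
        if pe >= cutoff:
            scores[tok] = math.log(pe) - math.log(pa)
    return scores

# Hypothetical distributions over a 4-token vocabulary
expert = [0.40, 0.35, 0.24, 0.01]
amateur = [0.60, 0.10, 0.299, 0.001]

scores = contrastive_scores(expert, amateur)
next_token = max(scores, key=scores.get)
print(next_token, {t: round(s, 3) for t, s in scores.items()})
```

Token 3 has the largest probability ratio but is filtered out because the expert assigns it very little mass; without that constraint, the difference alone would reward implausible tokens.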

Context-aware decoding (shi, …zettlemoyer, yih, 2023) - decode using the difference between the output probabilities when a model is used with and without context

DoLa: Decoding by Contrasting Layers Improves Factuality in LLMs (chuang…he, 2023) - contrasting later layers with early layers can improve truthfulness

Calibrate Before Use: Improving Few-Shot Performance of LMs (zhao, …, dan klein, sameer singh, 2021) - to make prompting easier, first calibrate output distr by making it uniform when given null inputs, e.g. “N/A”
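A minimal sketch of the calibration idea, assuming label probabilities are available both for the real input and for a content-free input such as “N/A”. Dividing by the content-free probabilities and renormalizing is a simplification chosen here so that the null input maps exactly to uniform; the numbers are made up.

```python
def calibrate(probs, content_free_probs):
    """Rescale label probabilities so the content-free input becomes uniform."""
    scaled = [p / p_cf for p, p_cf in zip(probs, content_free_probs)]
    total = sum(scaled)
    return [s / total for s in scaled]

labels = ["positive", "negative"]
p_cf = [0.7, 0.3]   # hypothetical output for the null input "N/A": biased to "positive"
p_raw = [0.6, 0.4]  # hypothetical output for a real review

p_cal = calibrate(p_raw, p_cf)
null_cal = calibrate(p_cf, p_cf)
raw_label = labels[p_raw.index(max(p_raw))]
cal_label = labels[p_cal.index(max(p_cal))]
print(raw_label, cal_label, [round(p, 3) for p in p_cal])
```

Here the raw prediction is "positive" only because of the prompt's bias; after calibration the same input is labeled "negative".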

Minimum Bayes Risk Decoding (suzgun, …, jurafsky, 2022) or (freitag et al. 2022)
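A minimal sketch of minimum Bayes risk decoding over a set of sampled candidates: pick the candidate with the highest average utility against the other samples. The unigram-F1 utility is an assumption for illustration; the cited papers use other utility metrics.

```python
def unigram_f1(hyp, ref):
    # Toy utility: F1 over overlapping whitespace tokens
    h, r = hyp.split(), ref.split()
    common = sum(min(h.count(w), r.count(w)) for w in set(h))
    if common == 0:
        return 0.0
    precision, recall = common / len(h), common / len(r)
    return 2 * precision * recall / (precision + recall)

def mbr_decode(candidates, utility):
    """Return the candidate with the highest mean utility vs. the other samples."""
    if len(candidates) < 2:
        raise ValueError("need at least two candidates")

    def expected_utility(i):
        others = [c for j, c in enumerate(candidates) if j != i]
        return sum(utility(candidates[i], o) for o in others) / len(others)

    best = max(range(len(candidates)), key=expected_utility)
    return candidates[best]

# Hypothetical samples from a model
samples = [
    "the cat sat on the mat",
    "a cat sat on the mat",
    "the cat is on the mat",
    "dogs bark loudly",
]
choice = mbr_decode(samples, unigram_f1)
print(choice)
```

The outlier sample gets zero utility against everything else, so MBR favors the "consensus" output rather than the single most probable one.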

A Frustratingly Simple Decoding Method for Neural Text Generation (yang, …, shi, 2023) - build an anti-LM based on previously generated text and use this anti-LM to penalize future generation of what has been generated

Mixture of Inputs: Text Generation Beyond Discrete Token Sampling (zhuang, liu, singh, shang, & gao, 2025) - post-hoc (requires no finetuning), combines discrete tokens into continuous vector

Min-p sampling (nguyen…shwartz-ziv, 2025) - adjusts the sampling threshold based on the model’s confidence by using the top token’s probability as a scaling factor
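A minimal sketch of the min-p truncation rule described above: keep tokens whose probability is at least `min_p` times the top token's probability, then renormalize. The distributions and the `min_p` value are illustrative assumptions.

```python
def min_p_filter(probs, min_p):
    """Keep tokens with prob >= min_p * max(prob), then renormalize."""
    threshold = min_p * max(probs)
    kept = {i: p for i, p in enumerate(probs) if p >= threshold}
    total = sum(kept.values())
    return {i: p / total for i, p in kept.items()}

confident = [0.90, 0.05, 0.03, 0.02]  # peaked distribution: high threshold
uncertain = [0.30, 0.30, 0.20, 0.20]  # flat distribution: low threshold

kept_confident = min_p_filter(confident, min_p=0.1)
kept_uncertain = min_p_filter(uncertain, min_p=0.1)
print(sorted(kept_confident), sorted(kept_uncertain))
```

The same `min_p` prunes aggressively when the model is confident and keeps every option when it is not, which is the confidence-dependent scaling the entry describes.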

Min-p, Max Exaggeration: A Critical Analysis of Min-p Sampling in LMs (schaeffer…denisov-blanch, 2025)

Sampling from Your LM One Byte at a Time (hayase, liu, smith, oh, 2025)

Broken Tokens? Your LM can Secretly Handle Non-Canonical Tokenizations (zheng…choi, smith, 2025) - some sequences can be tokenized in different ways (e.g. using character-level tokenizer) – feeding these into a model still generally works

1.4.1.5. prompt chaining / ensembling#

overviews

Ai chains: Transparent and controllable human-ai interaction by chaining LLM prompts (wu, terry, & cai, 2022) - chaining LLM steps together: output of one step becomes the input for the next

interactive system where users can modify chains + their intermediate results – improves performance + human experience

LM Cascades (dohan…sutton, 2022) - treat chaining models as probabilistic programs

use a probabilistic-programming language (PPL) to define a joint probability model on string-valued random variables, parameterized using LMs, and then condition this model on string-valued observations in order to compute a posterior over string-valued unknowns

self-PPLs extend probabilistic graphical models to support more complex joint distributions whose size and “shape” can itself be stochastic

e.g., a graph unrolled for a random number of iterations, until a data-dependent stopping criterion is met

variables are all text: questions \(Q\), answers \(A\), and intermediate thoughts \(T\)

prompt ensembles

liu…neubig, 2023 review discusses different strategies for ensembling prompts, e.g. averaging, weighted averaging

black-box querying

Tree-Prompting (morris…deng, 2023)

PromptBoosting: Black-Box Text Classification with Ten Forward Passes (hou, …, jacob andreas, …, zhang, 2022) - get a small pool of prompts, learn a verbalizer (final classification layer) for each, then ensemble them with AdaBoost on LLM output

many works have studied prompt ensembling (e.g. lester et al. 2021)

Boosted Prompt Ensembles for LLMs (pitis…ba, 2023) - similar but use CoT-style prompts and tasks, e.g. GSM8k

PREFER: Prompt Ensemble Learning via Feedback-Reflect-Refine (zhang…cai, 2023) - builds set of prompts dynamically rather than assuming they’re fixed

PTR: Prompt Tuning with Rules for Text Classification (han et al. 2021) – use logic rules to construct prompts with sub-prompts for many-class text classification (prompt is constructed hierarchically, but only one call is made to the LLM for inference)

soft prompts

Learning How to Ask: Querying LMs with Mixtures of Soft Prompts (Qin & Eisner, 2021) - learn a mixture of soft prompts using gradient descent

require model retraining

PRBOOST: Prompt-Based Rule Discovery and Boosting for Interactive Weakly-Supervised Learning (zhang…zhang, 2022) - iteratively (1) select high-error examples, (2) have human label them as rules, and (3) use boosting to train model on the new rules + ensemble

typical rule generation

Snuba (Varma and Ré, 2018) generates heuristics based on a small labeled dataset with pre-defined rule types

TALLOR (Li et al. 2021a) & GLaRA (Zhao et al. 2021) study rule expansion for NER problem based on lexical information and then select rules based on a hand-tuned threshold

Prompt ensembling / selection without labels

Zero-Label Prompt Selection (liao, zheng, & yang, 2022) - use prompts to label unlabeled data and then select prompts using these labels

A Simple Zero-shot Prompt Weighting Technique to Improve Prompt Ensembling in Text-Image Models (allingham…lakshminarayanan, 2023) - use confidence (max output logit) after appropriate normalization as weight

few-shot text classification

FastFit (yehudai & bandel, 2024) - fit few-shot batch with contrastive examples then predict using similarities to shots rather than a classification head (base model is roberta)

SetFit (tunstall…pereg, 2022) - finetune sentence transformer with contrastive loss, then train classification head

Dense Communication between LMs (wu, wang, yao, 2025) - use pre-trained LMs as modules, and pass continuous embeddings between them

train seq2seq models to connect the different small LMs, and get strong performance with very small training cost

1.4.1.6. llm querying / causal inference#

Can LLMs Infer Causation from Correlation? (jin…scholkopf, 2023) - introduce Corr2Cause dataset (must infer causal graph from correlational statements), doesn’t test pre-existing knowledge

Causal Reasoning and LLMs: Opening a New Frontier for Causality (kiciman…tan, 2023)

LLMs to be used alongside existing causal methods, as a proxy for human domain knowledge and to reduce human effort in setting up a causal analysis

cause-effect pairs, LLM has to discover from graph (tubingen benchmark, neuropathic pain, etc.)

Causal Inference in Natural Language Processing: Estimation, Prediction, Interpretation and Beyond (feder…veitch, diyi yang, 2022)

Zero-shot causal learning (nilforoshan…leskovec, 2023)

InferBERT: A Transformer-Based Causal Inference Framework for Enhancing Pharmacovigilance (wang…liu, 2021) - learn + test feature relationships from attention weights

CausaLM: Causal Model Explanation Through Counterfactual LMs (2021) - produce example-level causal model explanations using models finetuned on auxiliary adversarial tasks derived from the causal graph of the problem

Investigating Gender Bias in LMs Using Causal Mediation Analysis (vig, …, shieber, 2020)

Applies causal mediation analysis to identify decisive neurons and attention heads responsible for gender bias in LLMs

Identifies a small handful of decisive attention heads in this case

Amnesic Probing: Behavioral Explanation with Amnesic Counterfactuals (elazar, …, goldberg, 2021) - measure the importance of specific info within a model by introducing a causal intervention to erase that information, then observing the causal effects

TrustLLM (sun…zhao, 2024) - evaluation and benchmark of many aspects of trustworthiness (github)

What Evidence Do LMs Find Convincing? (wan, wallace, & klein, 2024) - rather than relying on facts, LLMs largely rely on textual similarities in evidence to decide whether it’s important

Deductive Closure Training of LMs for Coherence, Accuracy, and Updatability (akyurek…andreas, 2024) - LMs generate additional text implied by documents, reason about the generated text, and finetune on the correct text

LMs’ reasoning capabilities during inference can be leveraged during training to improve their reliability

Causal foundation models

Do-PFN: In-Context Learning for Causal Effect Estimation (robertson…hollmann, hutter, scholkopf, 2025)

Black Box Causal Inference: Effect Estimation via Meta Prediction (bynum…cho, ranganath, 2025)

CausalPFN: Amortized Causal Effect Estimation via In-Context Learning (balazadeh…krishnan, 2025)

1.4.1.7. uncertainty#

Semantic Uncertainty (kuhn, gal, & farquhar, 2023) - instead of calculating entropy over tokens, first generate set of answers, then cluster them based on semantic equivalence, before computing entropy

clustering is done via an LM that tests entailment, e.g. “The capital of France is Paris.” entails “Paris is the capital of France.” because they mean the same thing
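A minimal sketch of the cluster-then-entropy computation, assuming sampled answers are given as strings. The `toy_equivalent` check (same set of content words) is a hypothetical stand-in for the entailment model, and the entropy here uses cluster frequencies rather than model likelihoods, which is a simplifying assumption.

```python
import math

def cluster_by_meaning(answers, equivalent):
    """Greedily group answers that are equivalent to a cluster's first member."""
    clusters = []
    for a in answers:
        for c in clusters:
            if equivalent(a, c[0]):
                c.append(a)
                break
        else:
            clusters.append([a])
    return clusters

def semantic_entropy(answers, equivalent):
    # Entropy over meaning clusters instead of over individual strings
    clusters = cluster_by_meaning(answers, equivalent)
    n = len(answers)
    return -sum((len(c) / n) * math.log(len(c) / n) for c in clusters)

def toy_equivalent(a, b):
    # Stand-in for bidirectional entailment: same set of non-stopword tokens
    stop = {"the", "is", "of", "a"}

    def norm(s):
        return frozenset(w for w in s.lower().strip(".").split() if w not in stop)

    return norm(a) == norm(b)

answers = [
    "The capital of France is Paris.",
    "Paris is the capital of France.",
    "The capital of France is Lyon.",
]
clusters = cluster_by_meaning(answers, toy_equivalent)
entropy = semantic_entropy(answers, toy_equivalent)
print(len(clusters), round(entropy, 4))
```

Three distinct strings collapse into two meanings, so the entropy is lower than it would be if every surface form counted as a separate answer.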

Can LLMs Express Their Uncertainty? An Empirical Evaluation of Confidence Elicitation in LLMs (xiong…hooi, 2023)

verbalized uncertainty - model outputs its own uncertainty

consistency-based uncertainty - consistency between output generations

Quantifying Uncertainty in Natural Language Explanations of LLMs (tanneru…lakkaraju, 2023)

probing uncertainty (like consistency-based uncertainty above) - applies input perturbations (e.g., paraphrasing) and measure the consistency of the resulting explanations

verbalized uncertainty of explanations often performs poorly

Relying on the Unreliable: The Impact of LMs’ Reluctance to Express Uncertainty (zhou…sap, 2024)

LMs are often unable to express uncertainties

LMs tend to be overconfident

users rely heavily on LM generations, whether or not they are marked by certainty

Teaching Models to Express Their Uncertainty in Words (Lin et al., 2022) - GPT3 can generate both an answer and a level of confidence (e.g. “90% confidence”)

Decomposing Uncertainty for LLMs through Input Clarification Ensembling (hou…zhang, 2023)

1.4.1.8. prompt compression / compiling#

LLMLingua (jiang, wu…qiu, 2023) - learn BERT-size model to compress prompt (iterative token classification approach from distilled GPT-4 compressed prompts)

LongLLMLingua: Accelerating and Enhancing LLMs in Long Context Scenarios via Prompt Compression (jiang, wu…qiu, 2023)

1.4.1.9. classifier-guided generation#

Plug and Play LMs: A Simple Approach to Controlled Text Generation (dathathri, …, yosinski, & liu, 2020)

gradients from the classifier push the LM’s hidden activations, then recompute logits to guide generation (and maybe avg with original logits to maintain fluency)

FUDGE: Controlled Text Generation With Future Discriminators (yang & klein, 2021)

classifier predicts probability of attribute for running sequence with each next-token appended

these attribute probs. are multiplied with next-token probs for each token and then we sample from that distr (after normalization)
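A minimal sketch of the FUDGE reweighting step, assuming the LM's next-token probabilities and the classifier's attribute probabilities (one per candidate next token) are already computed. The vocabulary and numbers are made up.

```python
def fudge_reweight(next_token_probs, attribute_probs):
    """Multiply P(token | prefix) by P(attribute | prefix + token), then normalize."""
    joint = [p * a for p, a in zip(next_token_probs, attribute_probs)]
    total = sum(joint)
    return [j / total for j in joint]

vocab = ["great", "fine", "awful"]
lm_probs = [0.3, 0.3, 0.4]          # hypothetical LM next-token distribution
positive_probs = [0.9, 0.5, 0.05]   # hypothetical P(positive | prefix + token)

guided = fudge_reweight(lm_probs, positive_probs)
top_token = vocab[guided.index(max(guided))]
print(top_token, [round(g, 3) for g in guided])
```

The LM alone would most often pick "awful"; after reweighting, sampling from `guided` favors tokens the classifier expects to lead to the desired attribute.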

Diffusion-LM Improves Controllable Text Generation (lisa li, thickstun, gulrajani, liang, & hashimoto, 2022) - continuous embeddings

Mixture of Soft Prompts for Controllable Data Generation (chen, lee, …, yu, 2023) - trains a small model on data from a big frozen LLM that is then more controllable

1.4.2. architecture engineering & vetting#

1.4.2.1. architecture/attention variants#

state space models (good overview in albert gu thesis, 2023)

S4: structured state space models (gu…re, 2022) - similar to RNNs but can predict all outputs at once via convolution

the core of the state space model is basically a linear RNN

inputs x, hidden states h, outputs y

3 matrices: \(A, B, C\)

\(y_i = C h_i\)

\(h_i = A h_{i-1} + B x_i\)

note: there is no nonlinearity between hidden states

note: the transition from one hidden state to the next is the same for all positions (except for the input)

can compute hidden states simultaneously by just pre-multiplying these A and B matrices with x the right number of times (a convolution operation)
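A minimal sketch showing that the linear recurrence and the convolution view give the same outputs. For brevity it assumes a 1-dimensional hidden state, so \(A, B, C\) are scalars rather than matrices, and a zero initial state; the kernel entries are \(C A^k B\).

```python
def ssm_recurrent(A, B, C, xs):
    """Step through h_i = A h_{i-1} + B x_i, y_i = C h_i (starting from h = 0)."""
    h, ys = 0.0, []
    for x in xs:
        h = A * h + B * x
        ys.append(C * h)
    return ys

def ssm_convolution(A, B, C, xs):
    """Same outputs via a causal convolution with kernel K_k = C A^k B."""
    kernel = [C * (A ** k) * B for k in range(len(xs))]
    return [sum(kernel[k] * xs[i - k] for k in range(i + 1))
            for i in range(len(xs))]

A, B, C = 0.5, 1.0, 2.0  # illustrative scalar parameters
xs = [1.0, -2.0, 0.5, 3.0]

ys_rec = ssm_recurrent(A, B, C, xs)
ys_conv = ssm_convolution(A, B, C, xs)
impulse = ssm_recurrent(A, B, C, [1.0, 0.0, 0.0, 0.0])
print(ys_rec, ys_conv, impulse)
```

Unrolling works only because there is no nonlinearity between hidden states and the transition is the same at every position; the impulse response is exactly the convolution kernel.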

mamba: selective state space models (gu & dao, 2023)

changes the second note above – the transition from one hidden state to the next now depends on the input (making it closer to LSTMs)

\(B = B(x)\)

\(C = C(x)\)

RNNs are not Transformers (Yet): The Key Bottleneck on In-context Retrieval (wen, dang, & lyu, 2024) - RNNs fail to retrieve info from long contexts, RAG helps

MAD synthetic tasks: Mechanistic Design and Scaling of Hybrid Architectures (poli…ermon, re, zhang, & massaroli, 2024) - introduces 6 synthetic tasks on which performance correlates very well when scaling to real tasks: in-context recall, fuzzy in-context recall, noisy in-context recall, selective copying, compression, memorization

Scalable MatMul-free LMs (zhu…eshraghian, 2024) - LM architecture that doesn’t use matmuls, builds on GRU, and shows improved efficiency on FPGAs

The Era of 1-bit LLMs: All LLMs are in 1.58 Bits (ma…wei, 2024)

BitNet: Scaling 1-bit Transformers for LLMs (wang…wei, 2023)

Hierarchical Reasoning Model (Sapient; wang…yadkori, 2025) - 4 learnable components: an input network, a low-level recurrent module, a high-level recurrent module, and an output network

Misc

Tree Transformer: Integrating Tree Structures into Self-Attention (wang, .., chen, 2019)

Waveformer: Linear-Time Attention with Forward and Backward Wavelet Transform (zhuang…shang, 2022)

White-Box Transformers via Sparse Rate Reduction: Compression Is All There Is? (yaodong yu…yi ma, 2023)

1.4.2.2. diffusion LMs (DLMs)#

Nice survey here: A Survey on Diffusion LMs (li, chen, guo & shen, 2025)

Continuous modeling - transform discrete text into a continuous latent space, apply a diffusion process and then decode the output back into discrete text

Diffusion-LM Improves Controllable Text Generation (lisa li, thickstun, gulrajani, liang, & hashimoto, 2022) - fixed set of continuous word vectors are progressively denoised from Gaussian noise

Latent Diffusion for Language Generation (lovelace…weinberger, 2023)

AR-Diffusion: Auto-Regressive Diffusion Model for Text Generation (wu…chen, 2023)

TESS: Text-to-Text Self-Conditioned Simplex Diffusion (mahabadi…cohan, 2023)

Energy-Based Diffusion LMs for Text Generation (xu…leskovec, ermon, & vahdat, 2024)

From Denoising Diffusions to Denoising Markov Models (benton…doucet, 2024)

SEDD: Discrete Diffusion Modeling by Estimating the Ratios of the Data Distribution (lou, meng, & ermon, 2024) - model \(p(\text{altered text}) / p(\text{orig text})\), and make alterations using word swaps at individual locations

Mercury: Ultra-Fast LMs Based on Diffusion (inception labs…ermon, grover, kuleshov, 2025)

Masked modeling

LLaDA: Large Language Diffusion Models (nie, …, li, 2025) - scale to 8B and competitive with LLaMA 3 8B at many tasks

\(t \in (0, 1)\), each token is masked with prob \(t\), and iteratively predicts masked tokens as \(t\) moves from 1 to 0 (simultaneously predicts all masked tokens)
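A minimal sketch of the masking process and the iterative unmasking loop, assuming a toy oracle in place of the mask-predictor network. `oracle_predict`, the step count, and the random re-masking rule (re-mask each newly predicted token with probability \(t_{next}/t\)) are assumptions for illustration.

```python
import random

MASK = "[MASK]"

def forward_mask(tokens, t, rng):
    # Forward process: each token is masked independently with probability t
    return [MASK if rng.random() < t else tok for tok in tokens]

def reverse_generate(length, predict, steps, rng):
    """Start fully masked (t = 1) and move t toward 0 in equal steps."""
    seq = [MASK] * length
    for step in range(steps, 0, -1):
        t, t_next = step / steps, (step - 1) / steps
        guesses = predict(seq)  # predicts all masked positions simultaneously
        new_seq = []
        for current, guess in zip(seq, guesses):
            if current != MASK:
                new_seq.append(current)   # already revealed: keep
            elif rng.random() < t_next / t:
                new_seq.append(MASK)      # re-mask, to be predicted again later
            else:
                new_seq.append(guess)     # reveal the prediction
        seq = new_seq
    return seq

target = ["diffusion", "models", "predict", "masked", "tokens"]

def oracle_predict(seq):
    # Hypothetical stand-in for the network: always returns the target tokens
    return list(target)

rng = random.Random(0)
noised = forward_mask(target, t=0.5, rng=rng)
generated = reverse_generate(len(target), oracle_predict, steps=4, rng=rng)
print(noised, generated)
```

At the final step \(t_{next} = 0\), so nothing is re-masked and the sequence is fully revealed.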

Simple and Effective Masked Diffusion LMs (sahoo…rush, kuleshov, 2024)

LongLLaDA: Unlocking Long Context Capabilities in Diffusion LLMs (liu…qiu, 2025) - adds NTK+RoPE to LLaDA

Dream 7B (ye…kong, 2025)

DiffuLLaMA (gong…jiawei han, kong, 2025) - adapt LM by annealing the causal mask during training then slowly predicting a masked token’s label rather than the next token (minor point about shifting: still have each head predict the label of the next token rather than the current token, since it’s more similar to what the original model was trained for)

Diffusion LMs Can Perform Many Tasks with Scaling and Instruction-Finetuning (ye…quanquan gu, 2023) - adapt LLaMA to DLM via masked LMs, but lose skills during adaptation

Diffusion text embedding models (zhang…zhao, 2025) - finetune DREAM 7B

DreamOn (wu…kong, 2025) - finetune Dream 7B for variable length generation

Diffusion Beats Autoregressive in Data-Constrained Settings (prabhudesai…pathak, 2025)

Accelerating Diffusion LLMs via Adaptive Parallel Decoding (israel, van den broeck, grover, 2025) - dynamically adjusts the number of tokens sampled in parallel using small autoregressive model to help (kind of like opposite of speculative decoding)

DiffuSeq-v2: Bridging Discrete and Continuous Text Spaces for Accelerated Seq2Seq Diffusion Models (gong…kong, 2023) - parallel text generation

Esoteric LMs (sahoo…thickstun, vahdat, 2025) - bridge AR and masked diffusion model (MDM) paradigms + introduce KV-caching for MDMs

Beyond Masked and Unmasked: Discrete Diffusion Models via Partial Masking (chao…krishnan, 2025)

DiffuCoder: Understanding and Improving Masked Diffusion Models for Code Generation (gong…zhang, 2025) - increasing sampling temp. diversifies generation order of tokens

Uniform-state discrete diffusion models: fast, few-step generation but generally outperformed by masked diffusion models

D3PM: Structured Denoising Diffusion Models in Discrete State-Spaces (austin…van den Berg, 2021)

UDLM: Simple Guidance Mechanisms for Discrete Diffusion Models (schiff…kuleshov, 2024)

Duo: The Diffusion Duality (sahoo…kuleshov, 2025) - show that uniform-state discrete diffusion models can be built from underlying Gaussian diffusion, yielding faster generation (fewer steps)

applications

PLANNER: Generating Diversified Paragraph via Latent Language Diffusion Model (zhang…jaitly, 2023)

Edit Flows: Flow Matching with Edit Operations (havasi…chen, 2025) - trains flow matching with substitution, insertion, and delete operations to natively handle variable-length sequence generation

Deep Researcher with Test-Time Diffusion (han…pfister, lee, 2025) - not really a diffusion model, just resamples things

DLM reasoning

d1: Scaling Reasoning in Diffusion LLMs via Reinforcement Learning (zhao…grover, 2025)

Beyond Autoregression: Discrete Diffusion for Complex Reasoning and Planning (ye…kong, 2024)

Diffusion of Thoughts: Chain-of-Thought Reasoning in Diffusion LMs (ye…kong, 2024) - diffuse over time steps rather than tokens

Implicit Search via Discrete Diffusion: A Study on Chess (ye…kong, 2025)

Theory

Simplified and Generalized Masked Diffusion for Discrete Data (shi…titsias, 2024)

Flow Straight and Fast: Learning to Generate and Transfer Data with Rectified Flow (liu, gong, & liu, 2022)

Mean Flows for One-step Generative Modeling (geng…kolter, he, 2025)

Fisher Flow Matching for Generative Modeling over Discrete Data (davis…bronstein, bose, 2024)

Slightly related methods

Energy-Based Transformers are Scalable Learners and Thinkers (gladstone…iqbal, 2025) - optimize next-token distribution energy (like minimizing entropy)

1.4.2.3. mixture of experts (MoE) / routing#

mixture of experts models have become popular because of the need for (1) fast speed / low memory at test time while still (2) having a large model during training

note: nowadays often the “experts” are different MLPs following the self-attention layers (since their computations can be computed independently)

A Review of Sparse Expert Models in Deep Learning (fedus, jeff dean, zoph, 2022)

sparsity decouples the parameter count from the compute per example allowing for extremely large, but efficient models

routing algorithm - determines where to send examples

discreteness makes it difficult

some works use RL to learn routing

standard approach uses gumbel-softmax

usually get matrix of similarities between input tokens and experts and route based on these

sometimes route to topk experts rather than top1

load balancing - usually add an auxiliary loss to encourage equal tokens being sent to different experts
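A minimal sketch of top-k routing from a token-by-expert score matrix, plus a Switch-Transformer-style auxiliary load-balancing term (number of experts times the sum over experts of routed-token fraction times mean router probability). The router logits are made up, and the loss coefficient is omitted.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_logits, k):
    """For each token, pick the k highest-probability experts and renormalize weights."""
    assignments = []
    for logits in router_logits:
        probs = softmax(logits)
        top = sorted(range(len(probs)), key=lambda e: probs[e], reverse=True)[:k]
        norm = sum(probs[e] for e in top)
        assignments.append([(e, probs[e] / norm) for e in top])
    return assignments

def load_balance_loss(router_logits):
    """n_experts * sum_e (fraction of tokens with top-1 expert e) * (mean prob of e)."""
    n_tokens, n_experts = len(router_logits), len(router_logits[0])
    all_probs = [softmax(logits) for logits in router_logits]
    frac = [0.0] * n_experts
    for p in all_probs:
        frac[p.index(max(p))] += 1 / n_tokens
    mean_prob = [sum(p[e] for p in all_probs) / n_tokens for e in range(n_experts)]
    return n_experts * sum(f * m for f, m in zip(frac, mean_prob))

balanced = [[2.0, 0.0], [0.0, 2.0]]   # tokens prefer different experts
collapsed = [[2.0, 0.0], [2.0, 0.0]]  # every token prefers expert 0

routes = route_top_k([[2.0, 0.0, 1.0]], k=2)
loss_balanced = load_balance_loss(balanced)
loss_collapsed = load_balance_loss(collapsed)
print(routes, round(loss_balanced, 3), round(loss_collapsed, 3))
```

The loss is smallest when tokens are spread evenly, so adding it to the training objective discourages the router from collapsing onto a few experts.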

non-specialized experts

Early versions (Jacobs, michael jordan, nowlan, & hinton, 1991) had independent feed-forward networks serving as experts

Sparsely-gated MOE layer (Shazeer…quoc le, hinton, dean, 2017) have been studied with token-based routing with backprop

replace FFN in transformers with expert layers

GShard Lepikhin et al. (2021), which applies this concept to machine translation

Switch transformers (Fedus et al. (2022)) simplifies the architecture to activation of only one expert per layer

BASE Layers Lewis et al. (2021) - find an alternative approach to routing by formulating it as a linear assignment problem

Hash layers Roller et al. (2021) use a fixed hash as the gating function

THOR (zuo, liu…zhao, gao, 2022) - randomly route to different experts then merge at the parameter level at test time

routing notes - make hard decision but still want to learn probabilities

straight-through estimator (STE) - take the argmax during the forward pass, while considering the original probabilities in the backward pass

highly biased

gumbel-softmax - allows for better sampling
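A minimal sketch of the two routing tricks above, in NumPy. Since NumPy has no autograd, only the forward computations are shown; the function names and the temperature `tau=0.5` are assumptions for illustration. In an autograd framework, the straight-through estimator is commonly written as `hard + soft - stop_gradient(soft)`, so the forward pass uses the one-hot decision while gradients flow through the soft probabilities.

```python
import numpy as np

def gumbel_softmax(logits, tau=1.0, rng=None):
    """Relaxed sample from a categorical: softmax((logits + Gumbel noise) / tau)."""
    rng = np.random.default_rng() if rng is None else rng
    u = rng.uniform(low=1e-12, high=1.0, size=logits.shape)
    g = -np.log(-np.log(u))                     # Gumbel(0, 1) noise
    z = (logits + g) / tau
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def hard_forward(soft):
    """Forward half of straight-through: one-hot of the argmax of each row."""
    hard = np.zeros_like(soft)
    hard[np.arange(soft.shape[0]), soft.argmax(axis=-1)] = 1.0
    return hard

rng = np.random.default_rng(0)
soft = gumbel_softmax(np.zeros((5, 3)), tau=0.5, rng=rng)   # 5 tokens, 3 experts
hard = hard_forward(soft)
```

Lower `tau` pushes `soft` closer to one-hot (less bias, higher gradient variance); higher `tau` makes it smoother.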

specialized experts as fully independent models (sometimes for multi-task learning)

DEmix Layers (Gururangan…smith, zettlemoyer, 2021) – DEMix layers – placed in the feedforward layers of the Transformer – contain experts which specialize on specific domains. Routing at train time is determined only by the domain label, but all experts are activated at inference time and mixed according to weights estimated from a validation set

Sparsely Activated Mixture-of-Experts are Robust Multi-Task Learners (gupta…awadallah, gao, 2022) - use task description to improve routing

Pfeiffer et al. (2022) - multilingual expert model with language-specific routing

task-level MoE Kudugunta et al. (2021) – multi-task expert model with task-specific routing

scaling up

OPT-MOE (artetxe et al. 2021)

AutoMoE (jawahar, mukherjee, liu…gao, 2022)

Towards Understanding Mixture of Experts in Deep Learning (chen…gu, li, 2022)

Interpretable Mixture of Experts (ismail…pfister, 2023) - each sample assigned to single expert for prediction

InterpretCC: Intrinsic User-Centric Interpretability through Global Mixture of Experts (swamy…kaser, 2024) - first, discriminator predicts which features are important. Then, all other features are masked and used for prediction. The discriminator network can additionally select a different network to send different features to

1.4.2.4. pruning / caching#

SparseGPT: Massive LMs Can Be Accurately Pruned in One-Shot (frantar & alistarh, 2023) - prune GPT-style models to at least 50% sparsity in one-shot, without any retraining, at minimal loss of accuracy

Cramming: Training a LM on a Single GPU in One Day (geiping & goldstein, 2022) - tricks for training BERT

The Unreasonable Ineffectiveness of the Deeper Layers (gromov…roberts, 2025) - use angle similarity to search for which consecutive layers to remove and find that can easily remove large numbers of deep layers

fast decoding

KV caching + some other tricks - if repeatedly using the same tokens at the beginning of the context, can cache the KV vectors for those tokens

KV caching trades off speed with memory
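To make the speed/memory tradeoff concrete, here is a small single-head sketch (NumPy, random weights, all names hypothetical). It shows the incremental, per-step form of caching during causal decoding; caching a shared prompt prefix works the same way, by reusing the stored key/value rows for tokens that have already been processed.

```python
import numpy as np

def softmax(z):
    e = np.exp(z - z.max())
    return e / e.sum()

def attend(q, K, V):
    """Single-head attention for one query. q: (d,), K and V: (t, d)."""
    scores = K @ q / np.sqrt(q.shape[0])
    return softmax(scores) @ V

rng = np.random.default_rng(0)
d, T = 8, 6
Wq, Wk, Wv = (rng.normal(size=(d, d)) for _ in range(3))
x = rng.normal(size=(T, d))                     # token representations

# No cache: recompute keys/values for the whole prefix at every step
full = np.stack([attend(x[t] @ Wq, x[: t + 1] @ Wk, x[: t + 1] @ Wv) for t in range(T)])

# With cache: compute one new key/value row per step and reuse the rest
K_cache, V_cache = np.empty((0, d)), np.empty((0, d))
cached = []
for t in range(T):
    K_cache = np.vstack([K_cache, x[t] @ Wk])
    V_cache = np.vstack([V_cache, x[t] @ Wv])
    cached.append(attend(x[t] @ Wq, K_cache, V_cache))
cached = np.stack(cached)
```

The cached path does one key/value projection per step instead of one per prefix token, at the cost of storing `K_cache` and `V_cache`, which grow linearly with context length.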

FastGen: Model Tells You What to Discard: Adaptive KV Cache Compression for LLMs (ge…gao, 2024) - for each input prompt, run quick profiling to decide whether to evict things from the KV cache (e.g. attention heads that don’t care about long context, or heads that attend only to punctuation)

speculative decoding (leviathan, kalman, & matias, 2022) - draft multiple tokens with a small model, then verify them in parallel with the large model, potentially skipping steps for the large model

early exit - popular way to speed up inference

Multi-exit vision transformer for dynamic inference (Bakhtiarnia, A., Zhang, Q. and Iosifidis, A., 2021)

early layers have large activation maps so the early-exit classifier must be complex

solution: ViT class token allows early-exit classifier to have constant complexity

DeeBERT: Dynamic early exiting for accelerating BERT inference (xin…lin, 2020)

1.4.2.5. adaptation / transfer#

These are transformer-specific. For more general notes, see 📌 transfer learning or 📌 uncertainty. Most of these approaches can be combined with metalearning.

finetuning

finetune all DNN params

finetune linear layer on activations

standard - train linear model on the embedding of the first token (usually an added [CLS] token) (peters et al. 2018)

finetune linear model on all the activations

e.g. evci, et al. 2022 - learn linear layer (using group-lasso) on features extracted from all layers

finetune specific DNN params (e.g. just the bias terms)

Cutting Down on Prompts and Parameters (logan…sameer singh, riedel, 2021) - finetune only the bias terms; works even with null prompts

BitFit: Simple Parameter-efficient Fine-tuning for Transformer-based Masked Language-models (zaken, ravfogel, & goldberg, 2021) - finetune only bias terms

adapter - finetune lightweight layers on top of pre-trained layers (between finetuning all layers, and just finetuning a new layer)

add some new layers and retrain some specific things (all human choices)

side-tuning (zhang, sax…malik, 2020) - train a “side” network that is fused with the pretrained model via summation

Combining Modular Skills in Multitask Learning (ponti, sordoni, bengio, & reddy, 2022) - learn adaptor with disentangled inventory of skills

Text-to-LoRA: Instant Transformer Adaption (charakorn…lange, 2025)

vaguely similar to adapter

LoRA

QLoRA: Efficient Finetuning of Quantized LLMs (dettmers, …, zettlemoyer, 2023)

TOAST (shi, …, darrell, xin wang, 2023) - use top-down attention steering for efficient finetuning
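For the LoRA entry above, a minimal sketch of the low-rank update, assuming the usual parameterization (frozen weight `W` plus a scaled product `B @ A` of rank `r`, with `B` initialized to zero). The shapes, the `alpha / r` scaling, and the random stand-in for trained values are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
d_out, d_in, r, alpha = 6, 10, 2, 4.0

W = rng.normal(size=(d_out, d_in))          # frozen pretrained weight
A = rng.normal(size=(r, d_in)) * 0.01       # trainable, small random init
B = np.zeros((d_out, r))                    # trainable, zero init so the update starts at 0

def lora_forward(x, W, A, B, alpha, r):
    """y = x W^T + (alpha / r) * x A^T B^T; only A and B would receive gradients."""
    return x @ W.T + (alpha / r) * (x @ A.T) @ B.T

x = rng.normal(size=(3, d_in))
y0 = lora_forward(x, W, A, B, alpha, r)     # identical to the frozen model at init

B = rng.normal(size=(d_out, r))             # stand-in for values learned during finetuning
y_adapter = lora_forward(x, W, A, B, alpha, r)

# After training, the adapter can be folded into the weight (no extra inference cost)
W_merged = W + (alpha / r) * B @ A
y_merged = x @ W_merged.T
```

Only `r * (d_in + d_out)` parameters are trained per adapted matrix instead of `d_in * d_out`.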

predict a mask

ablate some model weights by training a binary mask over model parameters (Zhao et al., 2020; Radiya-Dixit and Wang, 2020)

predict mask over attention heads

prompting = few-shot learning = priming = in-context learning (starts with GPT)

prompting without changing any model parameters

limitation: can’t exploit sets longer than the training window

MetaICL: Learning to Learn In Context (min et al. 2022) - tune LLM to do in-context learning on a large set of training tasks (few-shot prompting at training time and at test-time)

Visual Prompting via Image Inpainting (bar…darrell, globerson, efros, 2022)

Pattern-Exploiting Training (PET) – Exploiting Cloze Questions for Few Shot Text Classification and Natural Language Inference (schick & schutze, 2021)

cloze questions - same as masked LMs: task is to replace some missing words

use cloze-question templates (e.g. it was “good” or “bad”) to get soft labels for unlabeled data and then finetune on these

prompt-tuning (also see next section on autoprompting)

Attentional Mixtures of Soft Prompt Tuning for Parameter-efficient Multi-task Knowledge Sharing

Mixture of Soft Prompts for Controllable Data Generation (chen, … yu, 2023) - LLMs as Synthetic Data Generators for Training Smaller Models

long-context adaptation

RoPE: RoFormer: Enhanced Transformer with Rotary Position Embedding (su…liu, 2021)

encodes the absolute position with a rotation matrix
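A minimal sketch of the rotation, assuming the standard RoPE frequencies (`base ** (-2i/d)` with `base = 10000`) and pairing adjacent dimensions. The function name `rope` is hypothetical. The useful property is that although each vector is rotated by its absolute position, the query-key dot product ends up depending only on the relative offset.

```python
import numpy as np

def rope(x, pos, base=10000.0):
    """Rotate each pair (x[2i], x[2i+1]) by angle pos * base**(-2i/d). x: (d,), d even."""
    d = x.shape[-1]
    freqs = base ** (-np.arange(0, d, 2) / d)   # one frequency per 2-d pair
    ang = pos * freqs
    cos, sin = np.cos(ang), np.sin(ang)
    x1, x2 = x[0::2], x[1::2]
    out = np.empty_like(x)
    out[0::2] = x1 * cos - x2 * sin
    out[1::2] = x1 * sin + x2 * cos
    return out

rng = np.random.default_rng(0)
q, k = rng.normal(size=8), rng.normal(size=8)

score_near = rope(q, 5) @ rope(k, 3)            # positions 5 and 3 (offset 2)
score_far = rope(q, 105) @ rope(k, 103)         # positions 105 and 103 (offset 2)
```

The long-context methods listed after this mostly change how `pos` or `freqs` are scaled so that positions beyond the training window map to rotation angles the model has already seen.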

NTK+RoPE (LocalLLaMA reddit post) - unequal interpolation and extrapolation across RoPE dimensions

YaRN (Peng et al., 2023) - categorizes RoPE dimensions into 3 frequency-based groups & applies extrapolation, NTK, and linear interpolations, respectively

LongRoPE (ding…yang, 2024)

exploit two forms of non-uniformities in positional interpolation through genetic algo search

progressive extension (first extend to 256k then to 2048k)

readjust on short contexts to preserve original perf

mt-dnn line of work

Multi-Task Deep Neural Networks for Natural Language Understanding (xiaodong liu … gao 2019) - multi-task learning on the 9 glue tasks (first layers are shared, then some task-specific layers at top)

RAdam: On the Variance of the Adaptive Learning Rate and Beyond (liyuan liu…gao, han, 2020)

usually need to do learning-rate warmup when training (e.g. with Adam)

RAdam = add a term to rectify the variance of the adaptive learning rate in Adam

SMART: Robust and Efficient Fine-Tuning for Pre-trained Natural LMs through Principled Regularized Optimization (jiang…gao, zhao, 2020)

Smoothness-inducing regularization, which effectively manages the complexity of the model

Bregman proximal point optimization to prevent aggressive updating

Microsoft Toolkit of Multi-Task Deep Neural Networks for Natural Language Understanding (xiaodong liu…gao, 2020)

Posterior Differential Regularization with f-divergence for Improving Model Robustness (hao cheng, …, gao 2021)

regularize model posterior difference between clean + noisy inputs (e.g. adversarially attacked inputs)

comparing different tasks

Task2Vec: Task Embedding for Meta-Learning (achille, …, soatto, perona, 2019) - summarize each task as a vector, by taking diagonal of fisher info matrix (derivative of network output wrt parameters) - clusters similar tasks

Efficiently Tuned Parameters are Task Embeddings (zhou…mcauley, 2022)

Editing Models with Task Arithmetic (ilharco, ribeiro, …, farhadi, 2022) - task vector is model weights after task finetuning - model weights before finetuning

can use this direction to alter model behavior
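A small sketch of the task-vector idea on toy state dicts (NumPy arrays keyed by parameter name). The helper names and the `scale` parameter are hypothetical; the arithmetic is just the definition above: finetuned weights minus base weights, then added back (or subtracted) with a scaling coefficient.

```python
import numpy as np

def task_vector(finetuned, base):
    """Task vector = finetuned weights - pretrained weights, per parameter."""
    return {name: finetuned[name] - base[name] for name in base}

def apply_task_vectors(base, vectors, scale=1.0):
    """Add scaled task vectors to the base weights (negative scale = negation/forgetting)."""
    out = {name: w.copy() for name, w in base.items()}
    for tv in vectors:
        for name in out:
            out[name] += scale * tv[name]
    return out

rng = np.random.default_rng(0)
base = {"w": rng.normal(size=(3, 3)), "b": rng.normal(size=3)}
ft_a = {name: w + 0.1 * rng.normal(size=w.shape) for name, w in base.items()}
ft_b = {name: w + 0.1 * rng.normal(size=w.shape) for name, w in base.items()}

tv_a, tv_b = task_vector(ft_a, base), task_vector(ft_b, base)
recovered = apply_task_vectors(base, [tv_a])              # gives back finetuned model A
multi = apply_task_vectors(base, [tv_a, tv_b], scale=0.5) # combine two tasks
negated = apply_task_vectors(base, [tv_a], scale=-1.0)    # move away from task A
```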

Overcoming Catastrophic Forgetting in Zero-Shot Cross-Lingual Generation (vu…constant, 2022) - train with prompts of some (language translation, task) pairs and show that they can generalize to new (language, task) pairs

1.4.2.6. instruction tuning / rlhf / rl#

We taught models to generate, now can we get them to understand?

PASTA: Tell Your Model Where to Attend: Post-hoc Attention Steering for LLMs (zhang et al. 2023) - select attention heads to upweight for specific part of the prompt

Model Tells Itself Where to Attend: Faithfulness Meets Automatic Attention Steering (zhang et al. 2024) - rather than user-given prompt upweighting, instead model decides what to upweight

Attention Reveals More Than Tokens: Training-Free Long-Context Reasoning with Attention-guided Retrieval (zhang…jingbo shang, 2025) - see what context tokens get high attention scores during CoT, then explicitly retrieve those and use in new CoT

Instruction Following by Boosting Attention of LLMs (guardieiro…wong, 2025) - like PASTA with cheaper profiling

Focus on This, Not That! Steering LLMs with Adaptive Feature Specification (lamb, davies, paren, torr, & pinto, 2025) - add focus instruction tuning, which finetunes LLM specifically to focus on some things while ignoring others

SIMS: Self-Improving Model Steering (zhu…wang, 2025) - generates and refines contrastive samples through iterative self-improvement cycles, enabling adaptive, context-specific steering

HonestLLaMA = Inference-Time Intervention: Eliciting Truthful Answers from a LM (li…wattenberg, 2023) - observe a full 40% difference between probe accuracy (decoding from activations) and generation accuracy (generating answer through prompting) on TruthfulQA

step 1 = profiling: identify a sparse set of attention heads with high linear probing accuracy for truthfulness (from small profiling set on truthfulqa)

step 2 = shift activation along these truth-correlated directions at inference time

Discovering Latent Knowledge in LMs Without Supervision (burns, ye, klein, & steinhardt, 2022) - identify whether text is true or false directly from a model’s unlabeled activations

LASER: Improving Reasoning in LMs with Layer-Selective Rank Reduction (sharma…misra, 2023)

Teach Llamas to Talk: Recent Progress in Instruction Tuning (gao blogpost 2023)

RL / RLHF algorithms for LLMs

PPO: Proximal Policy Optimization (schulman et al. 2017)

uses a policy gradient method to update the policy based on the reward from a separate reward model

DPO: Direct Preference Optimization (rafailov…manning, finn, 2023) - simpler technique that eliminates the need for a separate reward model using preference data directly

essentially frames the problem as a classification task between the chosen and rejected responses
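The "classification between chosen and rejected" framing can be written as a logistic loss on a log-probability margin. A minimal sketch, assuming sequence log-probabilities under the policy and a frozen reference model are already computed; the function name and `beta=0.1` are illustrative choices.

```python
import numpy as np

def dpo_loss(pi_chosen, pi_rejected, ref_chosen, ref_rejected, beta=0.1):
    """-log sigmoid(beta * [(log pi(y_c) - log ref(y_c)) - (log pi(y_r) - log ref(y_r))]).

    All inputs are arrays of summed sequence log-probabilities, one entry per preference pair.
    """
    margin = beta * ((pi_chosen - ref_chosen) - (pi_rejected - ref_rejected))
    return np.mean(np.logaddexp(0.0, -margin))  # stable form of -log(sigmoid(margin))

ref_c, ref_r = np.array([-12.0, -8.0]), np.array([-11.0, -9.0])

loss_init = dpo_loss(ref_c, ref_r, ref_c, ref_r)              # policy == reference
loss_better = dpo_loss(ref_c + 5.0, ref_r - 5.0, ref_c, ref_r)  # chosen upweighted
```

At initialization (policy equal to reference) the margin is zero and the loss is `log 2`, the same as an uninformed binary classifier.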

GRPO: Group Relative Policy Optimization (deepseek-r1, 2025) - samples a group of responses for each prompt and scores each one relative to the rest of its group, so no separate value model is needed (rewards can come from different sources, e.g. a reward model or a function like a code solver)
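The group comparison in GRPO is commonly implemented by normalizing rewards within each group of responses sampled for the same prompt. A minimal sketch of that advantage computation only (the clipped policy-gradient objective and KL term are omitted); the function name and `eps` are assumptions.

```python
import numpy as np

def group_relative_advantages(rewards, eps=1e-8):
    """rewards: (n_prompts, group_size). Advantage = (reward - group mean) / group std."""
    rewards = np.asarray(rewards, dtype=float)
    mean = rewards.mean(axis=-1, keepdims=True)
    std = rewards.std(axis=-1, keepdims=True)
    return (rewards - mean) / (std + eps)

# Two prompts, four sampled responses each, rewards from e.g. a unit-test checker
rewards = [[1.0, 0.0, 0.0, 1.0],
           [0.2, 0.9, 0.4, 0.5]]
adv = group_relative_advantages(rewards)
```

Responses that beat their own group's average get positive advantage, regardless of how hard the prompt is overall.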

human feedback

Learning to summarize with human feedback (OpenAI, 2020)

Can LMs learn from explanations in context? (lampinen et al. 2022)

natural language feedback (scheurer et al. 2022) - makes training more efficient

Training LMs with Language Feedback at Scale (scheurer et al. 2023)

Explanation-based Finetuning Makes Models More Robust to Spurious Cues (ludan…callison-burch, 2023)

Post hoc explanations of LMs can improve LMs (krishna…singh, lakkaraju, 2023) - use rationales as corrective signals for LLMs

Show Me How It’s Done: The Role of Explanations in Fine-Tuning LMs (ballout…kuhnberger, 2023)

RLAIF: Scaling Reinforcement Learning from Human Feedback with AI Feedback (lee…rastogi, 2023)

Tuning LMs by Proxy (liu…choi, smith, 2024)

Self-Rewarding LMs (yuan…weston, 2024)

Reinforcement Pre-Training (dong…wei, 2025)

1.4.2.7. test-time training#

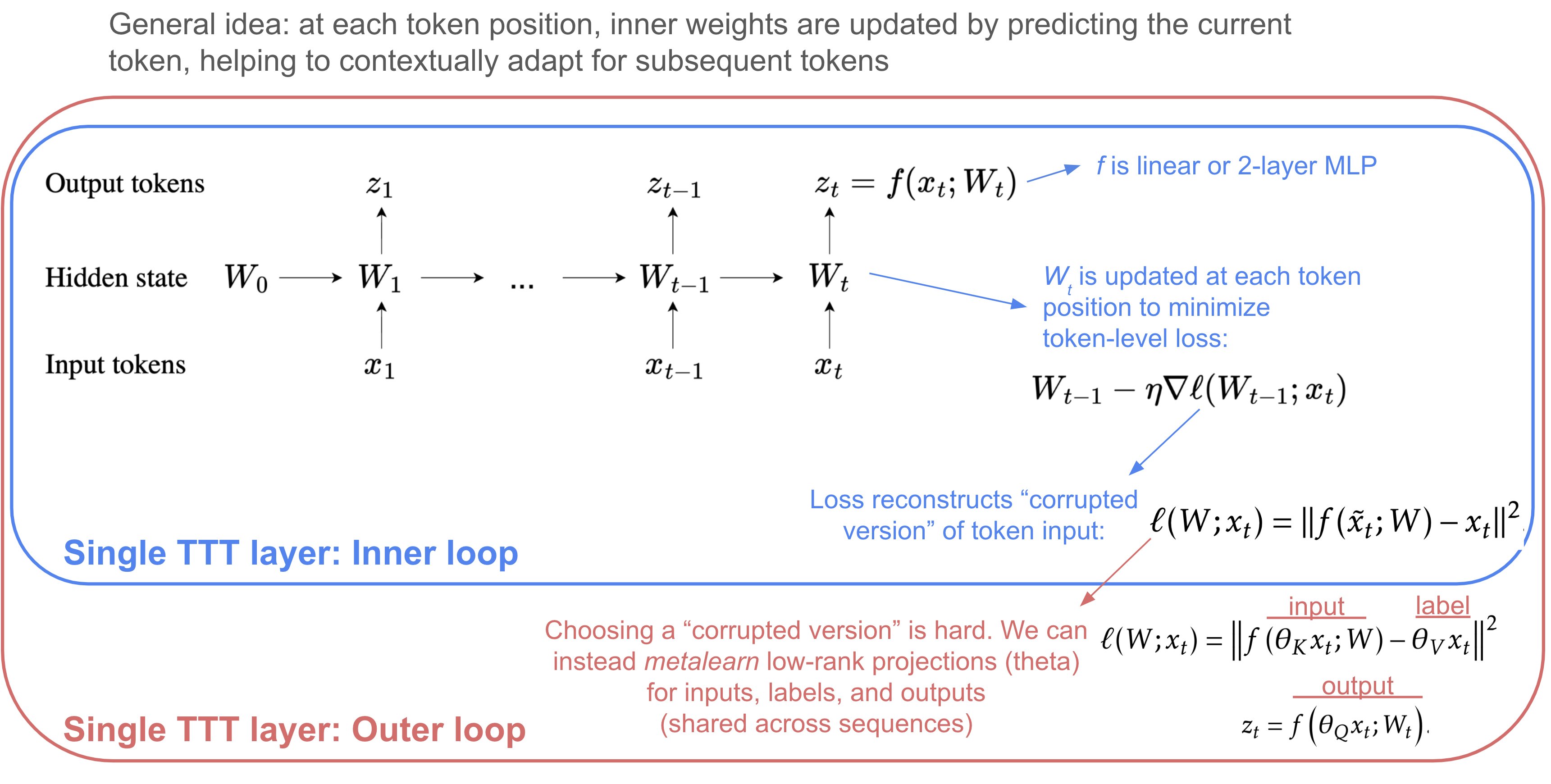

Learning to (Learn at Test Time): RNNs with Expressive Hidden States (sun…guestrin, 2024)

Critique Fine-Tuning: Learning to Critique is More Effective than Learning to Imitate (wang…chen, 2025)

s1: Simple test-time scaling (muennighoff…hashimoto, 2025)

1.4.3. (mech) interp#

1.4.3.1. model merging#

Model merging (some of these are non-transformer papers) = combine different models that have the same architecture (see collection of papers here and huggingface blog post here). Also see the review paper Deep Model Fusion: A Survey (li…shen, 2023)

standard methods (see mergekit package)

linear averaging, e.g. model soups (wortsman…schmidt, 2021)

spherical linear interpolation - interpolate angle but keep norm constant

TIES: Resolving Interference When Merging Models (yadav…raffel, bansal, 2023)

only keep top-k% most significant changes in weights

vote on signs of parameters

DARE (yu…li 2023)

randomly reset \(p\) fraction of changed fine-tuned weights to their original values in the base model

rescale remaining changed weights by \(1/(1-p)\)

passthrough/frankenmerging

stack layers to yield model with different size

e.g. depth up-scaling creates a larger model by merging some layers and copying others (solar 10.7B, kim…kim, 2023)
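Of the standard methods above, DARE's two steps (random reset, then rescale) are simple enough to sketch directly. This is an illustration on a single weight array with hypothetical names; a real merge would apply it per tensor and then combine the resulting deltas from several finetuned models.

```python
import numpy as np

def dare(base, finetuned, p, rng):
    """Reset a random fraction p of the finetuning deltas to 0, rescale the rest by 1/(1-p)."""
    delta = finetuned - base
    keep = rng.random(delta.shape) >= p         # each entry is dropped with probability p
    return base + keep * delta / (1.0 - p)

rng = np.random.default_rng(0)
base = np.zeros(100_000)
finetuned = base + 1.0                          # every weight changed by exactly 1

merged = dare(base, finetuned, p=0.5, rng=rng)
unchanged = dare(base, finetuned, p=0.0, rng=rng)
```

The `1/(1-p)` rescaling keeps the expected delta equal to the original delta, which is why large drop rates can be tolerated.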

more complex posthoc methods

Learning to Route Among Specialized Experts for Zero-Shot Generalization (muqeeth, …, raffel, 2024) - PHATGOOSE routes to different LoRA model for each token and at each layer

Fisher-Weighted Averaging (matena & raffel, 2022) - merge models with same architecture with particular weights

Git Re-Basin: Merging Models modulo Permutation Symmetries (ainsworth, hayase, & srinivasa, 2022) - permute units of one model to align them with a reference model before merging; supports linear mode connectivity between ResNet models on CIFAR

ZipIt! Merging Models from Different Tasks without Training (stoica…hoffman, 2023) - layerwise merging & don’t merge all the layers

Model Merging by Uncertainty-Based Gradient Matching (daheim…khan, 2023)

UnIVAL: multimodal merging (shukor…cord, 2023)

Multimodal Model Merging (sung…bansal, wang, 2023) - merge a separately trained vision & LM and get a multimodal model

LoraHub (huang…lin, 2023) - given examples from a new task, merge LoRA adaptors

AdaMerging: Adaptive Model Merging for Multi-Task Learning (yang…tao, 2023) - learn coefficients to average models by minimizing entropy on unlabeled test samples

Model Ratatouille: Recycling Diverse Models for Out-of-Distribution Generalization (rame…bottou, lopez-paz, 2022) - finetune many models initially trained on diverse tasks then average their weights

Diverse Weight Averaging for Out-of-Distribution Generalization (rame…cord, 2023)

UltraFuser - 2-stage training with token-level routing to 3 models (ding…sun, 2024)

training paradigms

Branch-Train-Merge: ELMS (Expert LMs) (li…smith, zettlemoyer 2022)

parallel LM of smaller expert LMs

each can be added/removed, ensembled, or parameter-averaged at any time for efficient scaling and rapid customization

improves perplexities, when controlling for training cost

require expert domain specialization

Cluster-Branch-Train-Merge (gururangan…smith, zettlemoyer, 2023) - start by clustering data to do unsupervised domain discovery

LiNeS: Post-training Layer Scaling Prevents Forgetting and Enhances Model Merging (wang…frossard, 2024) - updating deeper layers more than shallow layers helps prevent forgetting across tasks

fit many models into one

superposition of many models into one (cheung…olshausen, 2019) - both during training/testing models are indexed via a high-dim key for each task

supermasks in superposition (wortsman, …, yosinski, farhadi, 2020) - randomly fixed base net + for each task finds subnet that performs well

if task identity not given, correct subnet inferred by minimizing output entropy

non-transformer

snapshot ensembles - average different checkpoints during training (huang et al. 2017)

stochastic weight averaging (izmailov, …, wilson, 2019) - average multiple checkpoints during training

batch ensemble (wen et al. 2020) - have several rank-1 keys that index different weights hidden within one neural net

data-based distillation for model merging (roth…akata, 2024) - can combine multiple models that excel at different classes using data-based distillation

Model Fusion via Optimal Transport (singh & jaggi, 2019) - layer-wise fusion algorithm using optimal transport

Qualitatively characterizing neural network optimization problems (goodfellow, vinyals, & saxe, 2014) - linear interpolation experiments on DNNs

1.4.3.2. editing#

Editing is generally very similar to just adaptation/finetuning. One distinction is that it tends to try to keep changes localized, in an effort not to affect performance for most of the model.

Tell Your Model Where to Attend: Post-hoc Attention Steering for LLMs (zhang, singh, liu, liu, yu, gao, zhao, 2023) - upweight attention scores at specific positions to improve LLM controllability

Editing LLMs: Problems, Methods, and Opportunities (yao, …, zhang, 2023)

model-editing = data-efficient alterations to a model

memory-based

SERAC: Memory-Based Model Editing at Scale (mitchell…manning, finn, 2022)

keep track of list of edits in external memory and use them as appropriate context at test time (don’t finetune the model, instead train a smaller simpler model for using the external contexts)

LMs with Editable External Knowledge (li, liu…, neubig, andreas, 2024) - have LLM rewrite and update knowledge base as new docs are added

T-Patcher (Huang et al., 2023) and CaliNET (Dong et al., 2022) introduce extra trainable parameters into the feed-forward module of PLMs

weight updates

Knowledge Neurons in Pretrained Transformers (dai et al. 2021) - integrated gradients wrt each neuron in BERT, then selectively update these neurons

ROME: Locating and Editing Factual Associations in GPT (meng, bau et al. 2022)

localize factual associations - causal intervention for identifying neuron activations that are decisive in a model’s factual predictions

“causal traces” - run net multiple times, introducing corruptions and then restore states from original non-corrupted forward pass to see which states can restore the original results

a small number of states contain info that can flip the model from one state to another

change factual associations - modify feedforward weights to update specific factual associations using Rank-One Model Editing (ROME)
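A rough sketch of what a rank-one edit to a feedforward weight looks like, treating the weight as a key-value store: given a key `k` (representation of the subject) and a desired value `v`, update `W` so that `W k = v`. The closed form below is the constrained least-squares solution as I understand it from the ROME paper; how `k` and `v` are actually chosen (via causal tracing and optimization) is not shown, and the identity key covariance `C` is a simplifying assumption.

```python
import numpy as np

def rank_one_edit(W, k, v, C=None):
    """Return W' = W + (v - W k) (C^{-1} k)^T / (k^T C^{-1} k), so that W' k = v.

    W: (d_out, d_in), k: (d_in,), v: (d_out,), C: (d_in, d_in) key covariance (assumed identity).
    """
    d_in = W.shape[1]
    C = np.eye(d_in) if C is None else C
    c_inv_k = np.linalg.solve(C, k)
    residual = v - W @ k
    return W + np.outer(residual, c_inv_k) / (c_inv_k @ k)

rng = np.random.default_rng(0)
W = rng.normal(size=(5, 7))
k, v = rng.normal(size=7), rng.normal(size=5)
W_new = rank_one_edit(W, k, v)

# A key orthogonal to k is unaffected when C is the identity
k_other = rng.normal(size=7)
k_other -= (k_other @ k) / (k @ k) * k
```

The update changes `W` only along one direction, which is the sense in which the edit is localized.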

MEMIT: Mass Editing Memory in a Transformer (meng…, bau, 2022)

Aging with GRACE: Lifelong Model Editing with Discrete Key-Value Adapters (hartvigsen, …, palangi, …, ghassemi, 2023)

Flexible Model Interpretability through Natural LM Editing (d’oosterlinck, …, potts, 2023)

Model Editing with Canonical Examples (hewitt, …, liang, manning, 2024)

meta-learning

KnowledgeEditor: Editing Factual Knowledge in LMs (de cao, aziz, & titov, 2021) - train a network that takes in input, output, edit and predicts a weight update to the model

MEND: Fast model editing at scale (mitchell…finn, manning, 2022)

a collection of small auxiliary editing networks that use a single desired input-output pair to edit a pre-trained model

MEND learns to transform the gradient obtained by standard fine-tuning, using a low-rank decomposition of the gradient

REMEDI (hernandez, li, & andreas, 2023) and related activation engineering

get “edit vectors” by obtaining embeddings when passing attributes through LLM

perform edit by adding linear transformation of edit vector to prompt embedding

then, perform generation with latent embedding

learn linear transformation given a dataset of examples with attributes and desired completions

(also regularize the model to not change too much on other stuff)

Activation Addition: Steering LMs Without Optimization (turner…macdiarmid, 2023)

blog post: activation engineering: Steering GPT-2-XL by adding an activation vector (turner, …, mini, 2023)

obtain “steering vector” by embedding a phrase (e.g. love) and adding that vector to the llm embedding during generation

they only add the embedding for some layers for some tokens

Extracting Latent Steering Vectors from Pretrained LMs (subramani, …, peters, 2022) - find latent vectors via optimization that cause an LLM to output a particular sequence

then, use these vectors to do things like transfer to new tasks / compute textual similarity

Function Vectors in LLMs (todd…wallace, bau, 2023)

In-Context Learning Creates Task Vectors (hendel, geva, & globerson, 2023)

Programming Refusal with Conditional Activation Steering (lee…dhurandhar, 2024)

Improved Representation Steering for LMs (wu, yu, arora, manning, potts, 2025)

PURR: Efficiently Editing LM Hallucinations by Denoising LM Corruptions (chen…sameer singh…kelvin guu, 2023)

new datasets

MQUAKE: Assessing Knowledge Editing in LMs via Multi-Hop Questions (zhong…manning, potts, chen, 2023) - introduces benchmark MQUAKE + method MeLLo, which stores edited facts externally while prompting the LM iteratively to generate answers that are consistent with the edited facts

COUNTERFACT+ benchmark - checks that edits don’t affect existing info

ALMANACS: A Simulatability Benchmark for LM Explainability

model unlearning approaches (see review Rethinking Machine Unlearning for LLMs, liu et al. 2024)

gradient ascent - worsen performance on set of examples to forget

gradient descent - improve performance on examples labeled with hidden info, e.g. response “I don’t know”

localization-informed unlearning, e.g. ROME

influence function-based methods

prompt-based (e.g. only change prompt rather than model parameters)

Offset Unlearning for LLMs (huang…poon, chen , 2024) - unlearning for black-box models by learning the logit offset for contrasting with a smaller model

Steering Out-of-Distribution Generalization with Concept Ablation Fine-Tuning (casademunt…nanda, 2025) - don’t actually modify weights, just ablate concept embeddings during finetuning

1.4.3.3. direct weight inspection#

overviews

Overview of mechanistic interpretability (nanda, 2022+)

review paper (rauker…hadfield-menell, 2023)

A Primer on the Inner Workings of Transformer-based LMs (ferrando et al. 2024)

Representation engineering: A Top-Down Approach to AI Transparency (zou…kolter, hendrycks, 2023)

representation engineering (RepE) - analyzes representations/representation transformations rather than neurons or circuits

basically extends probing to more general tasks, including model control

Transformer visualization via dictionary learning: contextualized embedding as a linear superposition of transformer factors (yun, chen, olshausen, lecun, 2021) - investigate LLM embeddings of different words using dictionary learning

LLMs produce interesting contextualized word embeddings

dictionary elements (of activations across layers) correspond to meaningful things

dictionary element has size \(d\), the embedding size

given list of sentences \(S\), training matrix has size \(\left(\underbrace{\text{num\_layers}}_{\text{12 for BERT}} \cdot \sum_{s \in S} \text{len(s)}\right) \times \underbrace{d}_{\text{768 for BERT}}\)

dictionary coefficient: maps (text, layer, sequence_index) \(\to\) coefficient

extract \(d\)-dimensional embedding for text at specified layer & sequence_index

Neuron-level Interpretation of Deep NLP Models: A Survey (sajjad et al. 2022)

previous works generally use pre-specified concepts, and focus on

concept search - given a neuron find its concept(s)

neuron search - given a concept find its matching neuron(s)

concept search

visualization, e.g. karpathy, johnson, fei-fei li, 2015 visualize LSTM head response in text

elicit top-k ngram responses on a corpus, which are then labelled manually (kadar et al. 2017)

elicit top-k activating sentences from a corpus, which are then summarized using a parse tree into a synthetic explanation (na…kim, 2019)

limitation: the explanation may be ungrammatical and biased towards something arbitrary (like repetition)

input maximization (e.g. textattack, poerner et al. 2018)

Evaluating Neuron Interpretation Methods of NLP Models (fan…sajjad, 2023) - metric is how well evaluation from one method matches the other ones

A Circuit for Indirect Object Identification in GPT-2 small (wang, …, steinhardt, 2022)

explanation encompasses 26 attention heads grouped into 7 main classes

task: indirect object identification - “When Mary and John went to the store, John gave a drink to _ ” should be “Mary”

circuit

identify all previous names

remove duplicated names

output remaining name

Circuit Component Reuse Across Tasks in Transformer LMs (merullo, eickhoff, & pavlick 2024) - find that the same circuit is used for 2 different tasks: IOI from above and Colored objects (from big-bench)

Sparse Feature Circuits: Discovering and Editing Interpretable Causal Graphs in LMs (marks…belinkov, bau, mueller, 2024)

ex. for biasbios, find circuit and intervene so that it doesn’t rely on gender

Interpretability at Scale: Identifying Causal Mechanisms in Alpaca (wu…, potts, goodman, 2023) - propose boundless DAS and automatically identify a circuit for math

builds on DAS (geiger, …goodman, 2023)

N2G: A Scalable Approach for Quantifying Interpretable Neuron Representations in LLMs (foote, nanda, …, barez, 2023) - explain each neuron in a graph

Finding Skill Neurons in Pre-trained Transformer-based LMs (wang et al. 2022) - some individual neurons are predictive of the final task (dubbed “skill neurons”)

circuits thread (elhage…olah, 2021)

all layers are same dimension and each attention block adds a vector to it

Although they’re parameterized as separate matrices, \(W_O W_V\) and \(W_Q^T W_K\) can always be thought of as individual, low-rank matrices

\(x \in \mathbb R^{d_{embed} \times d_{sequence}}\): \(d_{embed}\) can be hundreds - tens of thousands

\(W_Q, W_K, W_V \in \mathbb R^{d_{attn} \times d_{embed}}\)

\(W_Q^TW_K \in \mathbb R ^{d_{embed} \times d_{embed}}\)

\(W_O \in \mathbb R^{d_{embed} \times d_{attn}}\): projects attention values back to embedding dimension

\(W_O W_V \in \mathbb R ^{d_{embed} \times d_{embed}}\)

\(W_E \in \mathbb R^{d_{embed} \times d_{vocab}}\) embeds initial tokens and \(W_U \in \mathbb R^{d_{vocab} \times d_{embed}}\) undoes the embedding

\(d_{vocab}\) can be very large, e.g. 50k

\(A = \text{softmax}(x^TW_Q^TW_Kx) \in \mathbb R^{d_{sequence} \times d_{sequence}}\)

if we have a 0-layer net (e.g. predict next token with linear layer given current token), we just learn bigram log-likelihood

2 circuits

QK circuit determines which “source” token the present “destination” token attends back to and copies information from

\(W_{E}^{T} W_{Q}^{T} W_{K} W_{E} \in \mathbb R ^{d_{vocab} \times d_{vocab}}\)

OV circuit describes what the resulting effect on the “out” predictions for the next token is

\(W_{U} W_{O} W_{V} W_{E} \in \mathbb R ^{d_{vocab} \times d_{vocab}}\)
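A quick shape check of the two circuits using random matrices with the dimensions defined above (toy sizes, chosen only for illustration). It also shows the low-rank point: both products are `d_vocab × d_vocab`, yet their rank is bounded by `d_attn`.

```python
import numpy as np

rng = np.random.default_rng(0)
d_vocab, d_embed, d_attn = 50, 16, 4            # toy sizes (assumption)

W_E = rng.normal(size=(d_embed, d_vocab))       # embedding
W_U = rng.normal(size=(d_vocab, d_embed))       # unembedding
W_Q, W_K, W_V = (rng.normal(size=(d_attn, d_embed)) for _ in range(3))
W_O = rng.normal(size=(d_embed, d_attn))

# QK circuit: entry [i, j] scores how strongly destination token i attends to source token j
qk_circuit = W_E.T @ W_Q.T @ W_K @ W_E

# OV circuit: column j gives the effect on output logits when source token j is attended to
ov_circuit = W_U @ W_O @ W_V @ W_E

qk_rank = np.linalg.matrix_rank(qk_circuit)
ov_rank = np.linalg.matrix_rank(ov_circuit)
```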

if a single head increases the probability of both “keep… in mind” and “keep… at bay”, it must also increase the probability of “keep… in bay” and “keep… at mind”

induction heads search previous examples of present token

If they don’t find it, they attend to the first token and do nothing

if they do find it, they then look at the next token and copy it. This allows them to repeat previous sequences of tokens, both exactly and approximately

sometimes can do some kind of “fuzzy” matching

tensor/kronecker product \(\bigotimes\):

Left-right multiplying: Multiplying \(x\) by a tensor product \(A \otimes W\) is equivalent to simultaneously left and right multiplying: \((A \otimes W) x=A x W^{T}\)

When we add them, it is equivalent to adding the results of this multiplication: \(\left(A_{1} \otimes W_{1}+A_{2} \otimes W_{2}\right) x=A_{1} x W_{1}^{T}+A_{2} x W_{2}^{T}\)

Softmax Linear Units

replacing activation function with softmax linear unit increases fraction of MLP neurons which are “interpretable”, i.e. correspond to meaningful features

however, may “hide” some non-neuron-aligned features by decreasing their magnitude and then later recovering it with LayerNorm

the presence of nonlinear activation functions creates an incentive for features to align with this basis and not get superposed

if the gains to sparse coding are large enough, this incentive will get overwhelmed

ways to combat polysemanticity

activation sparsity

lateral inhibition / co-occurrence sparsity

weight sparsity

superlinear activation functions

increase neurons per param

\(\text{SoLU}(x) = x \cdot \text{softmax}(x)\)

adds lateral inhibition, superlinearity, approximate sparsity

changes GeLU, which is approximately \(\text{sigmoid}(1.7x) \cdot x\)

just changing to SoLU decreases performance, had to add LayerNorm afterwards
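The SoLU formula above is short enough to write out directly. A minimal NumPy sketch (softmax taken over the neuron dimension; the LayerNorm that follows it in practice is omitted), with two small inputs that illustrate the lateral-inhibition effect:

```python
import numpy as np

def solu(x):
    """SoLU(x) = x * softmax(x), with the softmax over the last (neuron) axis."""
    z = x - x.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return x * e / e.sum(axis=-1, keepdims=True)

alone = solu(np.array([4.0, 0.0]))              # one strongly active neuron
competing = solu(np.array([4.0, 4.0]))          # two equally active neurons
dominant = solu(np.array([10.0, 0.0, 0.0]))     # large activation passes almost unchanged
```

In `competing`, each neuron is scaled by 0.5, so a neuron's output drops when its neighbors are also active; in `dominant`, the largest activation is kept nearly intact while the rest are suppressed.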

logit lens (2020) - apply unembedding matrix to outputs of each transformer layer
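A minimal sketch of the logit-lens operation: take the residual-stream state after each layer and multiply by the unembedding matrix to see which token it already "predicts". Real models usually apply the final layer norm before unembedding, which is omitted here; the function name and the toy unembedding are assumptions.

```python
import numpy as np

def logit_lens(hidden_states, W_U, top=1):
    """hidden_states: (n_layers, d_embed) for one position; W_U: (d_vocab, d_embed).

    Returns the indices of the top-scoring vocab entries at each layer, shape (n_layers, top).
    """
    logits = hidden_states @ W_U.T
    return np.argsort(-logits, axis=-1)[:, :top]

# Toy unembedding: vocab token i is read out from embedding dimension i
W_U = np.eye(5, 8)
hidden_states = W_U[[2, 4]]                     # "layer 0" looks like token 2, "layer 1" like token 4
preds = logit_lens(hidden_states, W_U)
```

The tuned lens replaces the fixed `W_U` readout with a learned linear map per layer.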

tuned-lens (belrose…steinhardt, 2023) - train linear model for each layer to decode vocab

Analyzing Transformers in Embedding Space (dar, …, berant, 2022) - apply unembedding matrix to weights, etc. to interpret transformers

Getting More from Less: LLMs are Good Spontaneous Multilingual Learners (zhang…huang, 2024) - applying logit lens finds that model internally translates to english in multilingual tasks

Future Lens: Anticipating Subsequent Tokens from a Single Hidden State (pal…wallace, bau, 2023) - can train linear decoder to decode future tokens from current hidden states

Patchscopes (ghandeharioun…geva, 2023) - decode LLM’s representation of a token by asking another copy of it to decode from that same representation (by repeating)

Do Natural Language Descriptions of Model Activations Convey Privileged Information? (li…wallace, 2025) - this type of method may not really tell us about the activations so much as the inputs

Monitoring Latent World States in LMs with Propositional Probes (feng, russell, & steinhardt, 2024) - identifying a binding subspace in which bound tokens have high similarity (Greg ↔ nurse) but unbound ones do not (Greg ↮ physicist)

How do LMs Bind Entities in Context? (feng & steinhardt, 2023)

In-Context Language Learning: Architectures and Algorithms (akyurek…andreas, 2024) - find evidence for “n-gram heads”, higher-order variants of previously seen “induction heads”

Zoology: Measuring and Improving Recall in Efficient LMs (arora…rudra, & re, 2023) - also find evidence for ngram heads

Does Time Have Its Place? Temporal Heads: Where LMs Recall Time-specific Information (park…kang, 2025)

The Dual-Route Model of Induction (feucht…bau, 2025) - “concept induction heads” - copy entire lexical units rather than individual tokens

Iteration heads (cabannes…charton, kempe, 2024) - when doing CoT for tokens, hypothesized iteration head (which shows up in small transformers trained on custom iterations tasks) implements attending to tokens sequentially and also the preceding CoT token

ICL performance depends primarily on function-vector heads rather than induction heads (yin & steinhardt, 2025)

function vectors are a compact representation of a task extracted from specific attention heads (the function-vector heads), and they can be added to a model’s computation to recover ICL behavior without in-context demonstrations

Retrieval Head Mechanistically Explains Long-Context Factuality (wu…fu, 2024)

A Phase Transition between Positional and Semantic Learning in a Solvable Model of Dot-Product Attention (cui…zdeborova, 2024) - solve 1-layer attention model for histogram task and find phase transition

The Hydra Effect: Emergent Self-repair in LM Computations (mcgrath…legg, 2023) - ablations at one attention layer of an LLM cause another layer to compensate

Neurons in LLMs: Dead, N-gram, Positional (voita, ferrando, & nalmpantis, 2023)

Codebook Features: Sparse and Discrete Interpretability for Neural Networks (tamkin, taufeeque, & goodman, 2023)

Program synthesis via mechanistic interpretability (michaud…tegmark) - condense RNN on simple algorithmic tasks into code

Your Transformer is Secretly Linear (razzhigaev…kuznetsov, 2024) - many transformer layers can be replaced by a linear layer

Fine-Tuning Enhances Existing Mechanisms: A Case Study on Entity Tracking (prakash…belinkov, bau, 2024) - finetuning does not seem to change the behavior of circuits, rather just enhances them

Mechanistically analyzing the effects of fine-tuning on procedurally defined tasks (jain…krueger, 2024) - finetuning learns a fairly simple wrapper that can be reversed easily

registers / attention sinks

Vision transformers need registers (darcet…mairal, bojanowski, 2023)

adding extra [reg1], [reg2] tokens that aren’t used at output improves vision transformer performance and attention map interpretability

without these tokens, attention maps are sometimes very noisy, particularly for uninformative tokens

Vision Transformers Don’t Need Trained Registers (jiang, dravid, efros, & gandelsman, 2025) - shifting the high-norm activations from register neurons into an additional untrained token mimics the effect of register tokens without retraining

Efficient Streaming LMs with Attention Sinks (xiao…lewis, 2023) - keep the first four tokens even when using a sliding window on a long context

observation: the first few tokens account for a surprisingly large share of the attention score, even if the tokens are not semantically important

potential explanation: if the next token to be generated has no match with any of the prior tokens, then the Softmax operation still forces the attention to sum to 1

sun…kolter, liu 2024 demonstrated that “attention sinks” emerge due to previous massive neuron activation

yona…gandelsman, 2025 linked the emergence of “attention sinks” to the inability of LMs to repeatedly generate a single token, and suggested a test-time fix by zeroing out the relevant activated neuron
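A toy sketch of the softmax explanation above, using made-up attention scores (the +4 offset on the first token is an arbitrary stand-in for a learned sink key, not a value from any of the papers): even when the query matches none of the prior tokens, the weights must sum to 1, and a token with a slightly larger logit absorbs most of the mass.

```python
import numpy as np

def softmax(x):
    # numerically stable softmax over a 1-D score vector
    x = x - np.max(x)
    e = np.exp(x)
    return e / e.sum()

# made-up scores of one query against 6 prior tokens; none is a real match
scores_no_match = np.array([0.1, -0.2, 0.0, 0.1, -0.1, 0.05])
attn = softmax(scores_no_match)

# same scores, but the first token gets a constant logit bonus (assumed sink)
scores_with_sink = scores_no_match.copy()
scores_with_sink[0] += 4.0
attn_sink = softmax(scores_with_sink)

print("no sink  :", attn.round(3), "sum =", attn.sum().round(3))
print("with sink:", attn_sink.round(3), "sum =", attn_sink.sum().round(3))
```

In the second case nearly all attention lands on the first token while the remaining tokens keep near-zero weight, which matches the "dump excess attention somewhere" picture.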

1.4.3.4. sparse autoencoders (saes)#

early papers

Interpreting and Steering LLMs with Mutual Information-based Explanations on Sparse Autoencoders (wu…liu, 2025) - introduce a penalty in explaining SAE features that mitigates a frequency bias to find diverse and unique words corresponding to an SAE feature

Improving Dictionary Learning with Gated Sparse Autoencoders (rajamanoharan…nanda, 2024)

neuronpedia: visualization tool for neuron SAEs (lin & bloom, 2024)

transformer-debugger using SAEs (openAI)

Automatically Interpreting Millions of Features in LLMs (paulo…belrose, 2024)

Actually do something useful

Resa: Transparent Reasoning Models via SAEs (wang…neiswanger, 2025) - train SAE on reasoning model (with reasoning data), then insert the frozen SAE into a base model and finetune the base model — this is more efficient than simply finetuning the base model

SAEs Are Good for Steering – If You Select the Right Features (arad, mueller, belinkov, 2025) - rather than looking at highly activated input tokens, look at tokens that are output when a feature is amplified, then use those for downstream steering

Sparse Autoencoders for Hypothesis Generation (movva…kleinberg, pierson, 2025) - use natural-language explanations of important SAE features for predicting a target variable [see further discussion in survey paper: Use Sparse Autoencoders to Discover Unknown Concepts, Not to Act on Known Concepts (peng…kleinberg, pierson, garg, 2025)]
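A minimal sketch of the output-side view of a feature described in the arad et al. entry above, under strong simplifying assumptions: the feature's decoder direction is added directly to a final-layer hidden state, there is no layer norm, and the vocabulary, unembedding matrix `W_U`, and feature direction are all toy stand-ins rather than anything from the paper.

```python
import numpy as np

rng = np.random.default_rng(0)
vocab = ["good", "bad", "great", "awful", "the", "of"]
d_model = 16

# toy unembedding: nearly orthogonal rows, one per vocabulary token
W_U = np.eye(len(vocab), d_model) + 0.01 * rng.normal(size=(len(vocab), d_model))

# toy SAE decoder direction, built to point toward "good" and "great"
feature_dir = W_U[0] + W_U[2]
feature_dir = feature_dir / np.linalg.norm(feature_dir)

def promoted_tokens(h, direction, alpha=5.0, k=2):
    """Tokens whose logits rise most when `direction` is amplified by alpha."""
    base_logits = W_U @ h
    steered_logits = W_U @ (h + alpha * direction)
    delta = steered_logits - base_logits
    top = np.argsort(-delta)[:k]
    return [vocab[i] for i in top]

h = rng.normal(size=d_model)  # stand-in hidden state
top = promoted_tokens(h, feature_dir)
print(top)
```

In a real model the effect of amplifying a feature passes through later layers and normalization, so the promoted tokens would be measured from actual steered generations rather than this linear shortcut.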

sparse autoencoder (sae) critiques

AxBench: Steering LLMs? Even Simple Baselines Outperform Sparse Autoencoders (wu…jurafsky, manning, potts, 2025)

Sparse Autoencoders Can Interpret Randomly Initialized Transformers (heap…aitchison, 2025)

Sparse Autoencoders Trained on the Same Data Learn Different Features (paulo & belrose, 2025)

1.4.3.5. linear representations#

Efficient Estimation of Word Representations in Vector Space (mikolov…dean, 2013) - find linear directions in word embeddings

The Linear Representation Hypothesis and the Geometry of LLMs (park…veitch, 2023) - concepts can be decoded linearly from representations

Not All LM Features Are Linear (engels…tegmark, 2024) - find irreducible multi-dimensional features (e.g. days of the week)

Linear Representations of Sentiment in LLMs (tigges…nanda, 2023) - sentiment is distributed across tokens (not just at sentiment-laden words)

Refusal in LMs Is Mediated by a Single Direction (arditi…nanda, 2024)

LLMs Encode Harmfulness and Refusal Separately (zhao…bau, shi, 2025) - identify harmfulness as a new dimension to analyze safety mechanisms in LLMs, which is encoded internally as a separate concept from refusal.

Convergent Linear Representations of Emergent Misalignment (soligo…nanda, 2025) - different approaches (e.g. mean weight differences vs lora) find different linear directions corresponding to emergent misalignment

some directions correspond to misalignment in a narrow domain, e.g. medicine

Uncovering Meanings of Embeddings via Partial Orthogonality (jiang, aragam, & veitch, 2023)

Emergent Linear Representations in World Models of Self-Supervised Sequence Models (nanda, lee, & wattenberg, 2023)

LEACE: Perfect linear concept erasure in closed form (belrose…biderman, 2023) - a classification task is linearly guarded if and only if every class has exactly the same mean feature vector

Null It Out: Guarding Protected Attributes by Iterative Nullspace Projection (ravfogel…gonen, twiton, goldberg, 2020)
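A toy check of the equal-class-means condition from the LEACE entry above. This is not LEACE itself (which also whitens the features so that the edit is minimal); it is the simpler projection that removes the class-mean-difference direction, shown on synthetic data where a binary concept leaks along one coordinate.

```python
import numpy as np

rng = np.random.default_rng(0)
n, d = 500, 8
y = rng.integers(0, 2, size=n)   # binary concept label
X = rng.normal(size=(n, d))
X[:, 0] += 2.0 * y               # concept leaks along coordinate 0

def class_mean_gap(X, y):
    # distance between the two class-conditional mean feature vectors
    return np.linalg.norm(X[y == 1].mean(axis=0) - X[y == 0].mean(axis=0))

# unit vector along the difference of class means
delta = X[y == 1].mean(axis=0) - X[y == 0].mean(axis=0)
u = delta / np.linalg.norm(delta)

# project every feature vector onto the subspace orthogonal to u
X_erased = X - np.outer(X @ u, u)

gap_before = class_mean_gap(X, y)
gap_after = class_mean_gap(X_erased, y)
print(f"mean gap before: {gap_before:.3f}, after: {gap_after:.2e}")
```

After the projection both classes share the same mean, so the condition stated above holds on this sample; iterative nullspace projection instead repeats a similar projection using directions found by trained linear classifiers.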

1.4.3.6. universal representations#

Universal Sparse Autoencoders: Interpretable Cross-Model Concept Alignment (thasarathan…derpanis, 2025)

Sparse Crosscoders for Cross-Layer Features and Model Diffing (anthropic blog post, 2024) - learn SAE across different layers of same model

Quantifying Feature Space Universality Across LLMs via Sparse Autoencoders (lan…barez, 2025)

From Tokens to Thoughts: How LLMs and Humans Trade Compression for Meaning (shani, jurafsky, lecun, & shwartz-ziv, 2025)

LLM-derived clusters significantly align with human-defined conceptual categories but show only modest alignment with human-perceived fine-grained semantic distinctions

LLMs demonstrate markedly superior information-theoretic efficiency in their conceptual representations compared to human conceptual structures

The Platonic Representation Hypothesis (huh, cheung, wang, & isola, 2024)

vec2vec (jha, zhang, shmatikov, & morris, 2025) - use cyclegan-style approach to translate embeddings from one space to another (without paired samples)

Rosetta Neurons: Mining the Common Units in a Model Zoo (dravid, …, efros, shocher, 2023)

Multimodal Neurons in Pretrained Text-Only Transformers (schwettmann…torralba, 2023)

Interpreting CLIP’s Image Representation via Text-Based Decomposition (gandelsman, efros, & steinhardt, 2023)

Universal Neurons in GPT2 LMs (gurnee…nanda, & bertsimas, 2024) - study the universality of neurons across GPT2 models trained from different initial random seeds

Text-To-Concept (and Back) via Cross-Model Alignment (moayeri…feizi, 2023) - given a new image encoder, if we want to align it to a text encoder, we can just learn a linear transformation from image embeddings to CLIP image embeddings and use the CLIP text encoder
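A minimal sketch of the linear alignment idea in the Text-To-Concept entry above, using random arrays as stand-ins for real embeddings (the dimensions, the noise level, and the helper name `to_clip_space` are all assumptions for illustration): fit an affine map from the new encoder's space to CLIP image space by least squares, then compare mapped embeddings against text embeddings.

```python
import numpy as np

rng = np.random.default_rng(0)
n, d_new, d_clip = 200, 16, 12

# stand-ins for embeddings of the same n images under two encoders
Z_new = rng.normal(size=(n, d_new))
W_true = rng.normal(size=(d_new, d_clip))
Z_clip = Z_new @ W_true + 0.01 * rng.normal(size=(n, d_clip))

# fit an affine map (weights plus bias) by ordinary least squares
A = np.hstack([Z_new, np.ones((n, 1))])
W, *_ = np.linalg.lstsq(A, Z_clip, rcond=None)

def to_clip_space(z):
    """Map embeddings from the new encoder into (approximate) CLIP image space."""
    z = np.atleast_2d(z)
    return np.hstack([z, np.ones((z.shape[0], 1))]) @ W

def cosine_sim(a, b):
    a = a / np.linalg.norm(a, axis=1, keepdims=True)
    b = b / np.linalg.norm(b, axis=1, keepdims=True)
    return a @ b.T

rel_err = np.linalg.norm(to_clip_space(Z_new) - Z_clip) / np.linalg.norm(Z_clip)

# stand-in for CLIP text-encoder outputs of 3 concept prompts
text_emb = rng.normal(size=(3, d_clip))
pred = cosine_sim(to_clip_space(Z_new[:5]), text_emb).argmax(axis=1)
print(f"relative fit error: {rel_err:.4f}, predicted concepts: {pred}")
```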

1.4.3.7. debugging / interpretation#

reviews

Rethinking Interpretability in the Era of LLMs (singh, inala, galley, caruana, & gao, 2024)

Because we have LLMs, we Can and Should Pursue Agentic Interpretability (been kim, hewitt, nanda, fiedel, & tafjord, 2025)

Usable XAI: 10 Strategies Towards Exploiting Explainability in the LLM Era (wu…liu, 2024)

TalkToModel: Understanding Machine Learning Models With Open Ended Dialogues (slack…lakkaraju, sameer singh, 2022) - natural language interface to query model (by converting to commands such as filtering the data / calculating importance)

Rethinking Explainability as a Dialogue: A Practitioner’s Perspective (lakkaraju, slack, …, sameer singh, 2022) - interviews with high-stakes users suggest they would like to be able to interact with systems via dialog

AdaTest: Adaptive Testing and Debugging of NLP Models (ribeiro & lundberg, 2022)

goal: easily specify, discover, and fix undesirable behaviors in an NLP model

2-step iterative algorithm

LLM generates many tests targeting the model’s failures

example of a test:

f(“I am a black woman”) ≠ neg

user selects and organizes the tests and reprompts the LLM to find more

User fixes the failing tests (e.g. via finetuning the model)

CheckList - Beyond Accuracy: Behavioral Testing of NLP models with CheckList (ribeiro…sameer singh, 2020)

matrix of general linguistic capabilities + test types

Fixing Model Bugs with Natural Language Patches (murty, manning, lundberg, & ribeiro 2022)

specify patches with natural language rather than hard rules, allowing them to better handle text

finetune a model to combine original model output with output from a patch-conditioned interpreter head

1.4.3.8. interpretable models#

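Backpack LMs (hewitt, thickstun, manning, & liang, 2023) - change the architecture so each word is represented by a set of context-independent sense vectors, which are combined with context-dependent weights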

(DirtyCat): Encoding High-Cardinality String Categorical Variables (cerda & varoquaux, 2020) - use embedding model to improve string categorical variables

LLMs can Learn Rules (zhu…dai, 2024)

Learning Transformer Programs (friedman, wettig, & chen, 2023) - place strong constraints on transformer architecture that allow it to be written as a RASP program compiled with Tracr

2 constraints

disentangled residual stream - attention head inputs K/Q/V are one-hot, outputs are concatenated at each layer

each module implements rule-based mapping: attention is one-hot

1.4.4. embeddings#

1.4.4.1. embedding models#

detailed overview of info retrieval (bruch, 2024)

Faiss: A library for efficient similarity search (johnson et al 2019) - implement fast approximate nearest neighbor search

introductory blog post on embeddings

basic training pipeline

standard self-supervised pre-training, e.g. BERT

weak unsupervised pre-training, e.g. weakly related text pairs, such as QA pairs from forums like StackExchange and Quora

high-quality contrastive finetuning on curated paired data, e.g. QA from web searches
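A minimal sketch of the contrastive objective behind the last two stages, assuming an InfoNCE-style loss with in-batch negatives (the notes do not specify the loss, and the temperature and the random stand-in embeddings are arbitrary choices): each query should score its own paired document higher than every other document in the batch.

```python
import numpy as np

def info_nce(queries, docs, temperature=0.05):
    """Mean cross-entropy of matching query i to doc i against in-batch negatives."""
    q = queries / np.linalg.norm(queries, axis=1, keepdims=True)
    d = docs / np.linalg.norm(docs, axis=1, keepdims=True)
    logits = q @ d.T / temperature                     # (batch, batch) cosine scores
    logits = logits - logits.max(axis=1, keepdims=True)  # for numerical stability
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return -np.mean(np.diag(log_probs))

rng = np.random.default_rng(0)
docs = rng.normal(size=(4, 8))                          # stand-in document embeddings
aligned_queries = docs + 0.1 * rng.normal(size=(4, 8))  # each query near its own doc
shuffled_queries = aligned_queries[::-1]                # every pair mismatched

loss_aligned = info_nce(aligned_queries, docs)
loss_shuffled = info_nce(shuffled_queries, docs)
print(f"aligned: {loss_aligned:.3f}, shuffled: {loss_shuffled:.3f}")
```

Training would backpropagate this loss through the encoder; the numpy version only shows that correctly paired embeddings yield a much lower loss than mismatched ones.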

datasets

Instructor eval: Billboard, Prompt retrieval

FollowIR (weller…soldaini, 2024)

Long contexts: LoCo Benchmark, Jina Long Context Benchmark

Older: BEIR benchmark (https://arxiv.org/abs/2104.08663)

Training

Nomic 235M curated text pairs (mostly filtered from here)

Followed by supervised contrastive fine-tuning on datasets like MSMarco, NQ, NLI, HotpotQA, Fever, WikiAnswers, etc.

MEDI (from Instructor paper): combines 300 datasets from Super-NaturalInstructions with 30 datasets from existing collections designed for embedding training

customization

e.g. add prompt or prefixes like search query, search document, classification, clustering before embedding so model knows how to match things
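A small sketch of the prefixing idea above. The prefix strings and the helper `add_task_prefix` are illustrative assumptions; the exact strings depend on how a given embedding model was trained, so they should be taken from that model's documentation.

```python
# Hypothetical task prefixes; real models define their own exact strings.
TASK_PREFIXES = {
    "query": "search_query: ",
    "document": "search_document: ",
    "classification": "classification: ",
    "clustering": "clustering: ",
}

def add_task_prefix(text, task):
    """Prepend the task prefix so the encoder knows how the text will be used."""
    if task not in TASK_PREFIXES:
        raise ValueError(f"unknown task: {task}")
    return TASK_PREFIXES[task] + text

query_input = add_task_prefix("what is an attention sink?", "query")
doc_input = add_task_prefix("Attention sinks absorb excess attention mass.", "document")
print(query_input)
print(doc_input)
```

The prefixed strings are what would be passed to the embedding model, with queries and documents encoded under different prefixes so that matching is asymmetric.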

top-performing models

NV-Embed: Improved Techniques for Training LLMs as Generalist Embedding Models (lee…ping, 2024)

LLM2Vec: LLMs Are Secretly Powerful Text Encoders (behnamghader…reddy, 2024)

Gecko: Versatile Text Embeddings Distilled from LLMs (lee…naim, 2024)

GRIT: Generative Representational Instruction Tuning (muennighoff…kiela, 2024) - train a single model that, given different instructions, can produce either generations or embeddings

EchoEmbeddings: Repetition Improves LM Embeddings (springer, kotha, fried, neubig, & raghunathan, 2024)